Sharpa and NVIDIA Push Robotics Training into a New Era of Dexterity

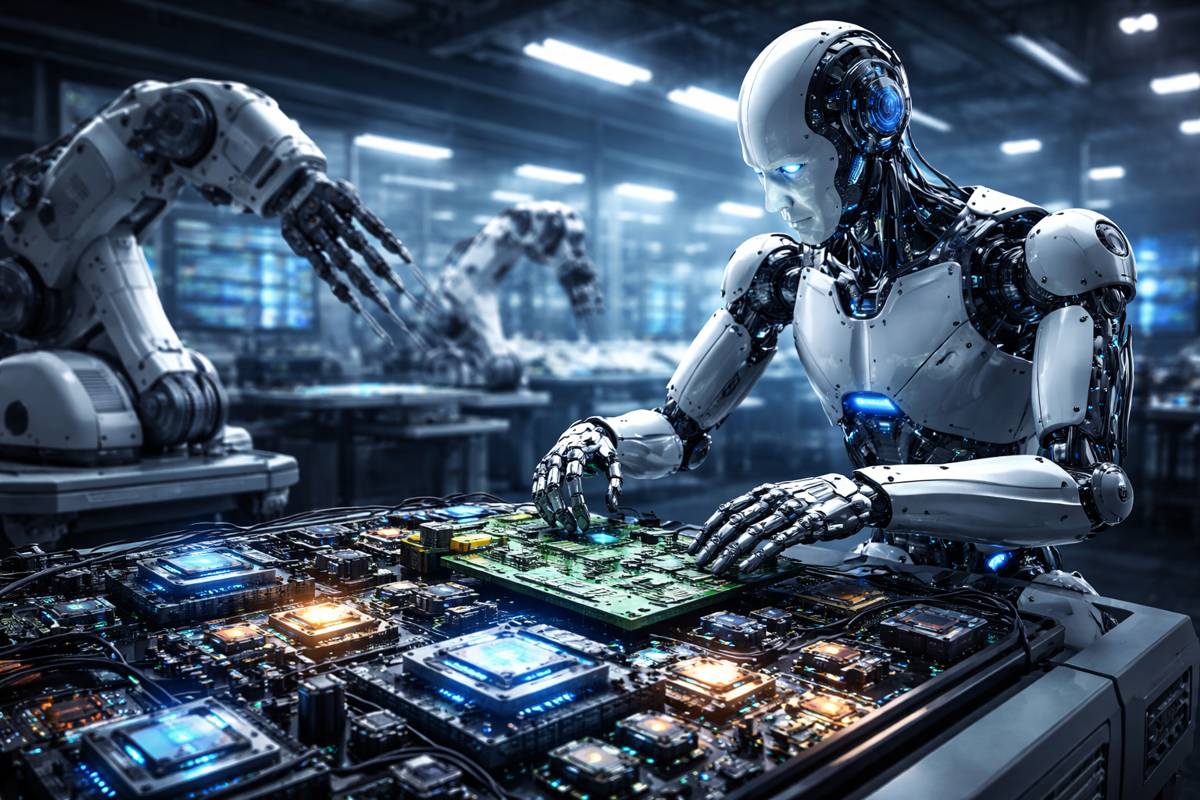

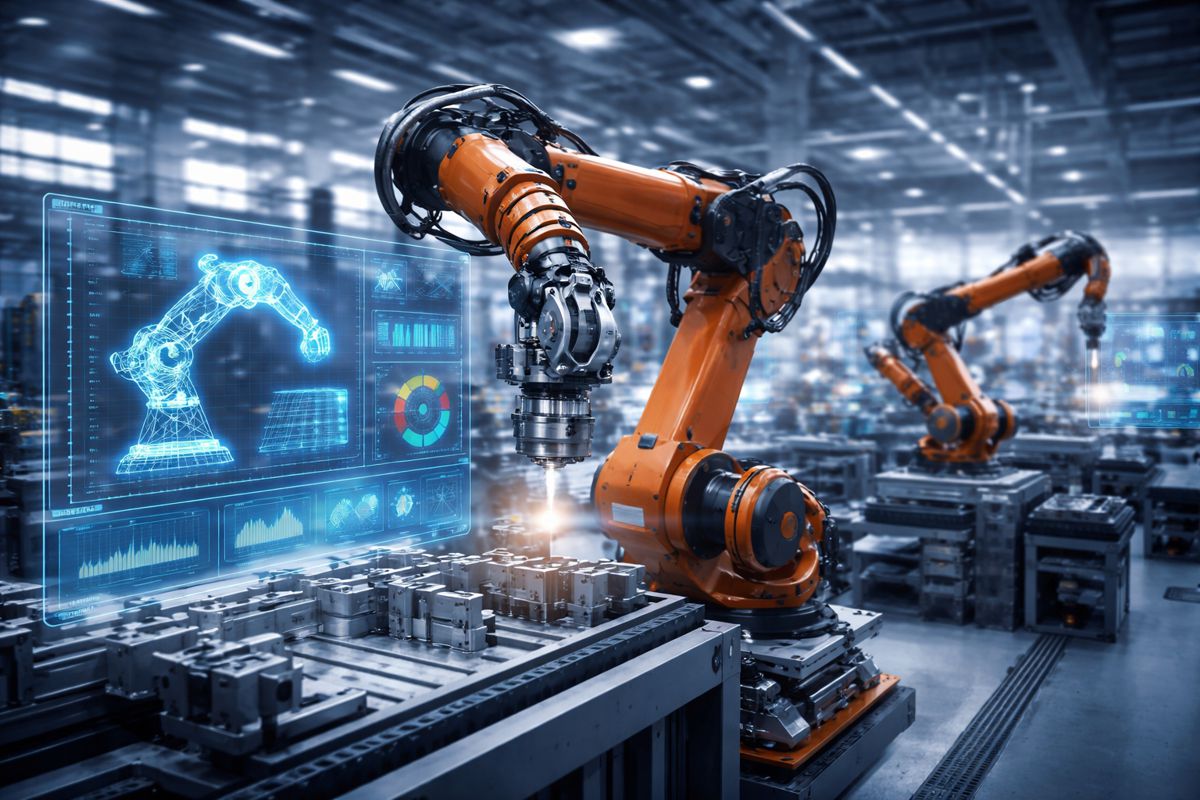

The global race to deploy intelligent robots capable of complex physical tasks is accelerating. While industrial automation has been common for decades, truly dexterous robots that can manipulate objects with human-like precision remain rare. Now, emerging research from robotics company Sharpa, developed in collaboration with NVIDIA, suggests that the gap between human skill and robotic capability may be narrowing far faster than many expected.

By combining advanced simulation techniques, tactile sensing and large-scale video training data, the partnership is addressing one of robotics’ most persistent challenges: teaching machines to interact reliably with the messy, unpredictable physical world. The research demonstrates measurable improvements in how robots learn manipulation tasks, potentially opening the door to large-scale deployment across manufacturing, logistics, healthcare support and consumer environments.

The work also signals a broader shift in the robotics ecosystem. Instead of relying solely on physical training and costly hardware testing, engineers are increasingly turning to sophisticated digital environments and AI-driven models to accelerate robot learning. Sharpa’s approach illustrates how simulation, tactile intelligence and large-scale data can converge to build machines capable of operating with far greater autonomy.

The Simulation Bottleneck in Robotics Development

Training robots to perform manipulation tasks has historically been one of the slowest and most expensive stages of robotics development. Unlike software systems, robots must interact with real objects, surfaces and forces. Even minor variations in weight, friction or positioning can cause a task to fail.

Traditionally, researchers have relied on two main approaches. The first involves physical training on real robots, which produces highly accurate results but demands enormous time and engineering supervision and wears down hardware. The second relies on simulated environments where robots can practice tasks millions of times digitally.

Simulation offers clear advantages. Reinforcement learning algorithms can iterate rapidly, allowing robots to experiment with countless strategies. However, simulation has long faced a fundamental compromise between realism and computational efficiency.

Highly detailed physical simulations accurately represent contact forces, materials and tactile feedback, but they are extremely computationally expensive. Simpler simulations run quickly but fail to capture the subtle physics that manipulation tasks require.

This gap between simulation and reality, often called the “sim-to-real problem,” has slowed progress in dexterous robotics for years. Engineers frequently discover that policies trained in simulation fail once transferred to real machines.

Sharpa’s latest research attempts to address this long-standing obstacle.
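The sim-to-real gap described above can be illustrated with a toy example. The sketch below is not Sharpa's or NVIDIA's method; it assumes a simple two-finger pinch in which friction must support the object's weight, and every number in it (masses, friction coefficients, safety margin) is hypothetical. A grip force tuned for one nominal simulated object succeeds every time in that simulation, then fails on a sizeable share of trials once mass and friction are allowed to vary.

```python
import random

G = 9.81  # gravitational acceleration in m/s^2


def grasp_succeeds(grip_force_n, mass_kg, friction):
    """Toy pinch model: two contacts, each resisting slip with friction * force."""
    return 2 * friction * grip_force_n >= mass_kg * G


def success_rate(grip_force_n, mass_range, friction_range, trials=10_000, seed=0):
    """Fraction of randomly sampled objects that the fixed grip force can hold."""
    rng = random.Random(seed)  # seeded so the result is repeatable
    wins = 0
    for _ in range(trials):
        mass = rng.uniform(*mass_range)
        friction = rng.uniform(*friction_range)
        wins += grasp_succeeds(grip_force_n, mass, friction)
    return wins / trials


# Grip force tuned for a single nominal simulated object
# (hypothetical values: 0.5 kg, friction 0.6, 10 percent safety margin).
nominal_force = 1.1 * 0.5 * G / (2 * 0.6)

# "Simulation": every object matches the nominal values exactly.
sim_rate = success_rate(nominal_force, (0.5, 0.5), (0.6, 0.6))

# "Reality": mass and friction vary around the nominal values.
real_rate = success_rate(nominal_force, (0.4, 0.6), (0.4, 0.8))

print(f"simulated success rate: {sim_rate:.0%}")
print(f"varied-conditions success rate: {real_rate:.0%}")
```

The fixed force is a stand-in for a learned policy. The point is only that a behaviour fitted to one idealised set of physical parameters can look perfect in simulation and still degrade when those parameters shift.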

Tacmap: A New Simulation Framework for Dexterous Robotics

Sharpa’s collaboration with NVIDIA centres on a simulation framework known as Tacmap. The system introduces a shared high-fidelity geometric representation that allows tactile simulation and physical interaction modelling to operate with both accuracy and speed.

Rather than forcing developers to choose between detailed physics or rapid computation, Tacmap aims to deliver both simultaneously. By aligning simulation geometry with tactile sensor modelling, the framework enables robots to train on interactions that more closely resemble real-world contact.

This development is significant because tactile perception plays a crucial role in dexterous manipulation. Humans rely heavily on touch when grasping, assembling or adjusting objects. Robotic systems must replicate this feedback to perform similar tasks reliably.

Sharpa’s simulation framework integrates tactile sensing directly into the learning process, allowing robots to understand how objects feel as well as how they look. This approach supports the training of its Vision Tactile Language Model architecture, which combines visual perception, tactile signals and language-based reasoning.

Importantly, Sharpa has announced that the simulation assets and code will be released as open source, allowing researchers and developers across the robotics community to build upon the framework.

Alicia Veneziani, Global Vice President of Go To Market and President of Europe at Sharpa, emphasised the broader significance of the collaboration: “This collaboration strengthens the foundation for training in simulation, advancing the robotics field towards more dexterity and autonomy and accelerating large-scale deployment.”

By making the tools accessible, Sharpa and NVIDIA are effectively contributing infrastructure to the wider robotics ecosystem.

Video Data and the Rise of Data-Efficient Robot Learning

Alongside simulation improvements, Sharpa’s hardware has also been used in a separate research effort focused on data-efficient learning.

Researchers from NVIDIA’s GEAR Lab recently explored how robots could learn manipulation tasks using policies derived from large-scale video datasets. The work builds on the GR00T model, a robotics foundation model trained on more than 20,000 hours of human activity footage.

Foundation models are becoming increasingly important in robotics, much like they have in language AI. Instead of learning tasks from scratch, robots can inherit general knowledge about movement and behaviour from massive datasets.

In this research, the learned policies were transferred to physical robots equipped with Sharpa’s Wave robotic hands. The robots were then tasked with performing detailed manipulation activities such as assembling model cars, handling syringes and sorting playing cards.

The results were striking. Robots equipped with the Wave hand achieved a 54 percent higher success rate in completing tasks compared with baseline approaches.

This suggests that combining large-scale video learning with highly dexterous robotic hardware can dramatically improve real-world performance.

The results also demonstrate the importance of anthropomorphic robot design. Human-like hands, combined with tactile sensing, allow robots to apply skills learned from human behaviour far more effectively than traditional industrial grippers.
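The 54 percent figure quoted above can be read in two ways, and the article does not say which one applies: as a relative improvement over the baseline success rate, or as a gain of 54 percentage points. The short calculation below shows how far apart the two readings are. The 40 percent baseline is purely hypothetical and is not taken from the research.

```python
def relative_gain(baseline, gain_pct):
    """Success rate if the gain is relative to the baseline rate."""
    return baseline * (1 + gain_pct / 100)


def absolute_gain(baseline, gain_points):
    """Success rate if the gain is in percentage points."""
    return baseline + gain_points / 100


baseline = 0.40  # hypothetical baseline success rate, for illustration only

relative = relative_gain(baseline, 54)
absolute = absolute_gain(baseline, 54)

print(f"relative reading: {relative:.1%}")  # prints "relative reading: 61.6%"
print(f"absolute reading: {absolute:.1%}")  # prints "absolute reading: 94.0%"
```

Under the same assumed baseline, the two interpretations differ by more than 30 percentage points, which is why the underlying baseline figures matter when comparing results.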

Sharpa’s Dexterity-First Robotics Platform

Sharpa’s broader robotics platform reflects a deliberate focus on dexterity as the central challenge of robotics development. While many robotics companies concentrate on mobility or perception, Sharpa is building systems specifically designed to handle complex manipulation tasks.

The company’s hardware and software stack includes several key components.

North, a general-purpose humanoid robot, integrates whole-body control with fine loco-manipulation capabilities. This enables the robot to combine movement and hand-based tasks, similar to how humans coordinate arms, hands and body posture.

Wave, Sharpa’s flagship robotic hand, incorporates 22 active degrees of freedom along with tactile sensors. This design allows the hand to replicate many of the subtle finger movements used in human manipulation.

Such dexterity is critical for tasks involving delicate objects or intricate assembly processes. Industries ranging from electronics manufacturing to healthcare assistance require robotic systems capable of precise interaction.

Completing the platform is CraftNet, Sharpa’s Vision Tactile Language Architecture. The system integrates multiple AI components designed to handle visual perception, tactile sensing and motion planning simultaneously.

CraftNet includes two functional layers described as the Motion Brain and the Interaction Brain. The Motion Brain directs overall movement, while the Interaction Brain governs physical contact with objects.

Together, these systems enable millimetre-level precision when interacting with real-world environments.

The architecture also maps tactile signals onto data captured from human demonstrations, including video recordings and glove-based motion capture. This mapping makes training significantly more data-efficient.
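The division of labour between a movement layer and a contact layer, as described above, can be sketched in a few lines. This is not CraftNet's actual design, which the article does not detail. The `Observation` fields, the function names, the step size, the target pressure and the gain are all hypothetical, chosen only to show one way a coarse motion controller can hand over to a touch-driven controller once contact is detected.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    object_distance_mm: float  # hypothetical: distance to the object, from vision
    contact_pressure: float    # hypothetical: tactile reading, 0.0 means no contact


def motion_layer(obs, step_mm=5.0):
    """Coarse layer: approach the object without overshooting it."""
    return min(step_mm, obs.object_distance_mm)


def interaction_layer(obs, target_pressure=1.0, gain=0.5):
    """Fine layer: proportional correction of grip toward a target pressure."""
    return gain * (target_pressure - obs.contact_pressure)


def control_step(obs):
    """Use the motion layer until touch is sensed, then the interaction layer."""
    if obs.contact_pressure > 0.0:
        return {"move_mm": 0.0, "grip_delta": interaction_layer(obs)}
    return {"move_mm": motion_layer(obs), "grip_delta": 0.0}


# Far from the object: only the motion layer acts.
approach = control_step(Observation(object_distance_mm=12.0, contact_pressure=0.0))

# In contact but gripping too lightly: only the interaction layer acts.
adjust = control_step(Observation(object_distance_mm=0.0, contact_pressure=0.4))

print(approach)
print(adjust)
```

A real system would likely blend the two layers rather than switch between them outright; the hard switch here just keeps the example small.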

Robotics Enters the Foundation Model Era

Sharpa’s research reflects a wider transformation taking place across the robotics industry. Over the past few years, robotics has begun to adopt the same large-scale AI training methods that revolutionised language and image recognition.

Major technology companies are now investing heavily in robotics foundation models. These systems combine massive datasets with simulation and real-world experimentation to create general-purpose robotic intelligence.

NVIDIA, for instance, has been building a comprehensive robotics ecosystem that includes the Isaac simulation platform, the GR00T robotics foundation model and dedicated hardware for accelerated AI computation.

Other organisations are pursuing similar approaches. Research initiatives at institutions such as MIT, Stanford and DeepMind are exploring how robots can learn from human video data, language instructions and shared datasets.

The long-term objective is to create robots capable of learning thousands of tasks rather than being programmed for a single specialised role.

Such systems could transform sectors ranging from construction and infrastructure maintenance to logistics, manufacturing and emergency response.

For industries struggling with labour shortages and safety concerns, the ability to deploy adaptable robotic workers could become a major productivity driver.

Global Robotics Investment Accelerates

The robotics sector has experienced rapid investment growth over the past decade. According to the International Federation of Robotics, more than half a million industrial robots are installed globally each year, with Asia accounting for the largest share.

However, most existing robots remain limited to structured environments such as automotive manufacturing lines. Deploying robots in dynamic settings like warehouses, construction sites or healthcare environments requires far greater levels of dexterity and decision-making.

This is precisely the problem Sharpa aims to solve.

Founded in 2024, the company has quickly established itself within the AI robotics landscape. Its headquarters in Singapore position it within one of Asia’s fastest-growing technology ecosystems, while engineering operations in Shanghai support manufacturing development. Meanwhile, business operations in Mountain View connect the company to Silicon Valley’s AI research community.

Sharpa has already received recognition for its design and technological innovation, including the iF Product Design Award and the CES Innovation Award in 2026.

Participation in NVIDIA’s Inception programme further integrates the company into a global network of AI startups and research partners.

A Glimpse of the Next Generation of Robots

Sharpa plans to showcase its full robotics stack at NVIDIA’s GTC 2026 event, demonstrating how tactile intelligence and dexterous hardware can work together to accelerate robot deployment.

The demonstration will highlight the combination of human-like robotic hands and advanced AI models capable of interpreting both visual and tactile data simultaneously.

If the underlying research continues to deliver improvements in reliability and training efficiency, such technologies could dramatically shorten the timeline for widespread robotic adoption.

For construction, infrastructure and industrial sectors, this development could eventually reshape the nature of work. Robots capable of precise manipulation could assist with equipment assembly, component installation, hazardous inspection tasks and maintenance operations in difficult environments.

While fully autonomous humanoid robots remain a long-term goal, advances in simulation training and dexterity suggest the industry is moving steadily toward that future.

Sharpa’s work with NVIDIA represents another step along that path, demonstrating that the next generation of robots may learn their skills not only through hardware testing but through vast digital worlds built to mirror reality.

As robotics continues to converge with artificial intelligence, the boundary between simulation and physical capability is beginning to blur. And when that boundary finally disappears, the age of truly dexterous machines may arrive sooner than expected.