Vision AI Powers Humanoid Robot Safety in the Real World

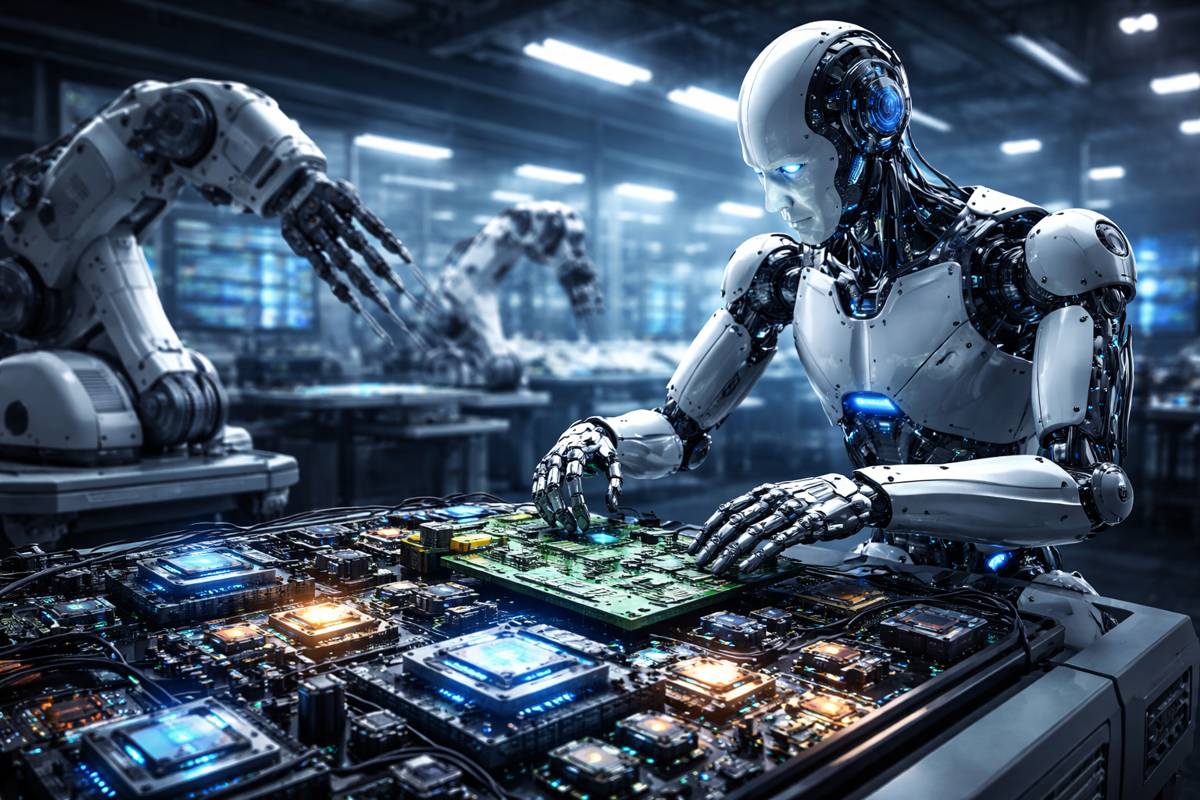

In the race to bring humanoid and legged robots into real-world environments, performance is no longer the only benchmark that matters. Increasingly, the conversation has shifted toward safety, predictability and trust. As robots move out of controlled labs and onto construction sites, logistics hubs and urban infrastructure projects, their ability to “see” and interpret complex environments has become mission critical.

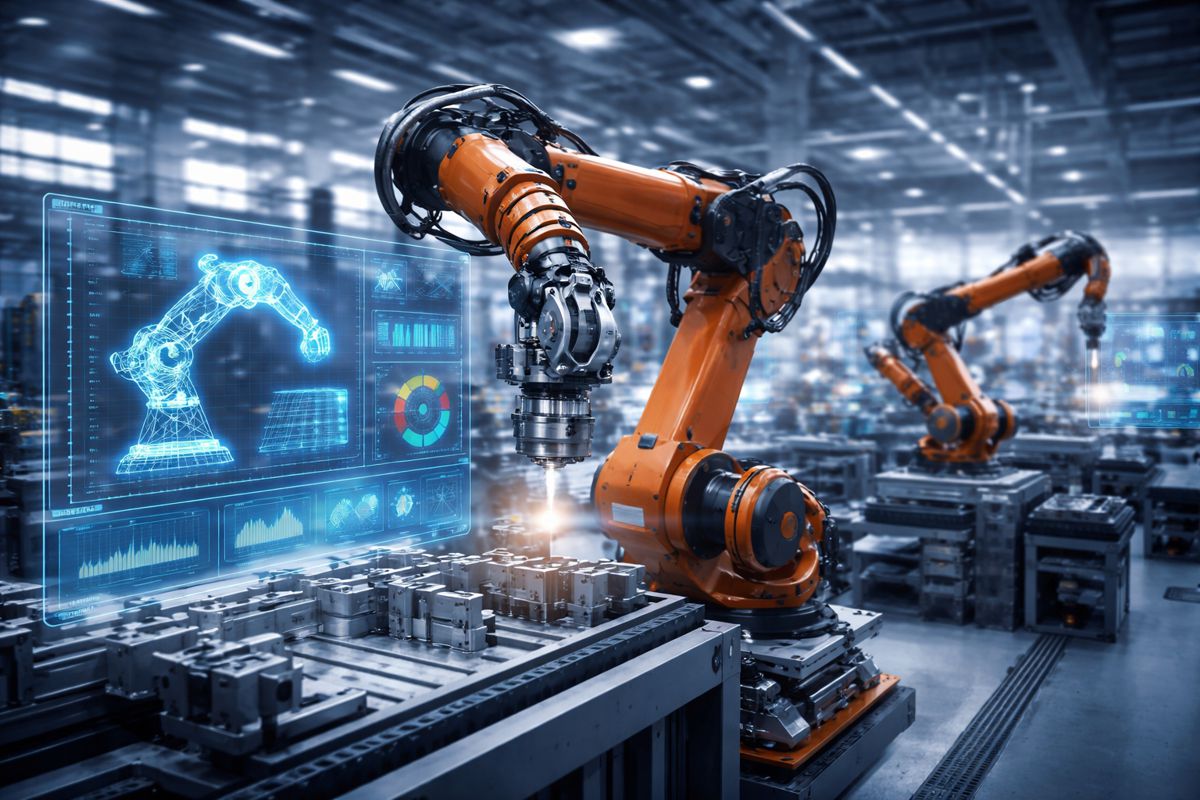

At the heart of this transformation lies perception technology. Cameras, depth sensors and AI-driven reasoning systems are no longer passive components. They function as a robot’s visual cortex, enabling machines to understand space, anticipate movement and operate safely alongside people. In infrastructure environments, where unpredictability is the norm rather than the exception, that capability is essential.

This shift is particularly relevant for construction and industrial sectors, where automation is advancing rapidly but safety standards remain uncompromising. According to the International Federation of Robotics, global installations of professional service robots continue to grow year on year, especially in logistics, inspection and construction. Yet widespread adoption hinges on one factor above all others: whether robots can operate safely in dynamic, human-centred environments.

A New Benchmark for Autonomous Navigation at NVIDIA GTC

At NVIDIA GTC, RealSense, LimX Dynamics and NVIDIA jointly demonstrated a significant milestone in robotics perception.

The demonstration showcases autonomous humanoid navigation powered by dense 3D depth perception, Visual SLAM and advanced visual odometry. By integrating RealSense depth cameras with NVIDIA’s cuVSLAM and visual odometry stack, the system enables robots to localise, map and navigate in real time without human intervention.
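Visual odometry estimates how the camera moved between consecutive frames. The robot then chains those small motions into a running pose estimate. The minimal sketch below shows only that chaining step. It assumes planar motion and takes the per-frame increments as already estimated. Production visual odometry, including the cuVSLAM stack named above, estimates full 3D motion from images, and its internals are not shown here. `Pose2D` and `integrate_odometry` are hypothetical names.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose2D:
    """Planar pose: position (x, y) in metres and heading theta in radians."""
    x: float
    y: float
    theta: float

    def compose(self, delta: "Pose2D") -> "Pose2D":
        """Apply a motion `delta` expressed in this pose's own (body) frame."""
        c, s = math.cos(self.theta), math.sin(self.theta)
        heading = self.theta + delta.theta
        return Pose2D(
            x=self.x + c * delta.x - s * delta.y,
            y=self.y + s * delta.x + c * delta.y,
            # Wrap the heading back into (-pi, pi].
            theta=math.atan2(math.sin(heading), math.cos(heading)),
        )


def integrate_odometry(increments):
    """Chain frame-to-frame motion estimates into a trajectory starting at the origin."""
    pose = Pose2D(0.0, 0.0, 0.0)
    trajectory = [pose]
    for delta in increments:
        pose = pose.compose(delta)
        trajectory.append(pose)
    return trajectory
```

As an example, four increments of "move 1 m forward, then turn 90 degrees" trace a square that returns to the origin. With real, noisy increments the end point would drift away from the origin. Correcting that accumulated drift, for instance by recognising previously visited places, is what SLAM adds on top of odometry.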

This is more than a technical showcase. It signals a shift in how humanoid robots can be deployed across industries. Instead of relying on remote operators or pre-programmed paths, robots are beginning to interpret their surroundings independently, adjusting their movements based on real-world conditions.

For infrastructure operators, this has profound implications. Autonomous inspection robots, for example, could navigate complex construction zones, identify hazards and adapt to changing site conditions without constant supervision. Similarly, logistics robots could operate safely in mixed environments where people, vehicles and materials interact continuously.

Bridging the Gap Between Simulation and Reality

One of the longstanding challenges in robotics has been the so-called “sim-to-real” gap. Training robots in simulation is efficient and scalable, but translating that learning into reliable real-world behaviour has often proven difficult.

To address this, development of the humanoid navigation system was accelerated using NVIDIA Isaac Lab, a high-fidelity simulation framework that allowed engineers to train reinforcement learning models under realistic conditions before deploying them on physical robots.

The advantage of this approach is twofold. First, it reduces the risks associated with real-world testing, particularly in safety-critical environments. Second, it enables faster iteration, allowing developers to refine behaviours such as balance, obstacle avoidance and terrain adaptation without the constraints of physical hardware.

As a result, the humanoid robot demonstrated at GTC exhibits stable and predictable motion, even in complex three-dimensional environments. This level of reliability is essential for infrastructure applications, where even minor errors can have significant safety and financial consequences.

Moving Beyond Flat Surfaces to True 3D Mobility

Wheeled robots have dominated automation in recent years, largely because they operate on predictable, flat surfaces. In settings ranging from warehouses to autonomous cleaning, their limitations have been acceptable because those environments are controlled.

However, infrastructure projects rarely offer such simplicity. Construction sites, transport hubs and urban environments are inherently three-dimensional, with uneven terrain, stairs, elevation changes and dynamic obstacles.

Humanoid and quadruped robots are designed to address these challenges, but their complexity introduces new risks. Unlike wheeled systems, they must constantly adjust their balance, foot placement and trajectory in response to changing conditions.

Traditional sensing technologies such as 2D LiDAR and encoder-based odometry fall short in these scenarios. They lack the depth awareness needed to interpret complex terrain or anticipate hazards effectively. This has historically limited the deployment of legged robots, forcing operators to rely on teleoperation or tightly controlled environments.

The integration of dense 3D perception changes that equation. By capturing detailed spatial information, robots can understand not just where objects are, but how they relate to the environment in three dimensions. This enables safer navigation across stairs, kerbs and uneven surfaces, as well as more effective interaction with dynamic elements such as moving equipment or personnel.
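A dense depth image can reveal terrain structure because each pixel can be turned back into a 3D point. The minimal sketch below uses the standard pinhole camera model for that conversion. It then compares two ground points ahead of the robot to flag a step. Several parts are assumptions: a level camera, made-up intrinsics and a hypothetical 3 cm noise tolerance. The function names are also illustrative, and none of this represents the demonstrated system's terrain pipeline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intrinsics:
    fx: float  # focal length along x, in pixels
    fy: float  # focal length along y, in pixels
    cx: float  # principal point x, in pixels
    cy: float  # principal point y, in pixels


def deproject(u: float, v: float, depth_m: float, k: Intrinsics) -> tuple:
    """Pinhole back-projection of pixel (u, v) with measured depth Z.

    Returns (x, y, z) in metres in the camera frame: x right, y down, z forward.
    """
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def classify_transition(p_near, p_far, tolerance_m: float = 0.03) -> str:
    """Label the terrain change between two ground points ahead of the robot.

    ASSUMPTION: the camera is level, so its y axis points straight down and a
    smaller y means a higher point. tolerance_m is a hypothetical noise margin.
    """
    rise = p_near[1] - p_far[1]  # positive when the far point is higher
    if rise > tolerance_m:
        return "step up"
    if rise < -tolerance_m:
        return "step down"
    return "flat"
```

For example, assume intrinsics `Intrinsics(400.0, 400.0, 320.0, 240.0)`. Pixel (320, 400) at 2.0 m deprojects to a point 0.8 m below the camera. Pixel (320, 336) at 2.5 m deprojects to a point 0.6 m below it. The roughly 0.2 m rise is classified as `"step up"`. A real system would first rotate points into a gravity-aligned frame using the robot's estimated orientation.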

Enabling Predictable and Human-Centric Robot Behaviour

Safety in robotics is not just about avoiding collisions. It is also about predictability. Humans need to understand how robots will behave in order to trust and work alongside them.

The perception stack demonstrated at GTC addresses this by enabling smoother, more human-readable motion. Instead of abrupt stops or erratic corrections, robots can move in a controlled and consistent manner, adapting gradually to changes in their environment.

This is particularly important in shared workspaces. On construction sites, for instance, workers must be able to anticipate the movements of autonomous equipment to maintain safe distances and coordinate tasks effectively. Predictable robot behaviour reduces cognitive load and enhances overall site safety.

“Humanoids operate in three dimensions, alongside people, in environments that are constantly changing,” said Nadav Orbach, CEO of RealSense. “If robots are going to work safely beside humans, perception carries responsibility beyond raw sensors. It must function as the robot’s visual cortex, enabling accurate localization, collision avoidance, terrain understanding and stable, predictable motion in unstructured environments.”

In practical terms, this translates into several key capabilities:

- Continuous localisation and mapping of the surrounding environment

- Real-time collision avoidance with people and moving objects

- Stable locomotion across complex terrain

- Adaptive path planning in dynamic conditions
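Collision avoidance and predictable motion can support each other. One simple way to avoid abrupt stops is to let the permitted speed fall gradually as the nearest obstacle gets closer. The minimal sketch below illustrates that idea. It is a hypothetical rule, not the perception stack demonstrated at GTC. The thresholds `stop_m`, `slow_m` and `v_max` are made-up defaults, and obstacle points are assumed to be already expressed in the robot's own planar frame.

```python
import math


def nearest_obstacle_distance(points_xy):
    """Distance in metres from the robot (origin of its own frame) to the
    closest obstacle point, or infinity if nothing is detected."""
    return min((math.hypot(x, y) for x, y in points_xy), default=math.inf)


def speed_cap(distance_m, stop_m=0.5, slow_m=2.0, v_max=1.0):
    """Hypothetical speed limit (m/s) that ramps down linearly near obstacles.

    Beyond slow_m the robot may use its full speed v_max; at or inside stop_m
    it halts; in between, the cap shrinks in proportion to the remaining
    clearance, so the robot decelerates gradually instead of stopping abruptly.
    """
    if distance_m <= stop_m:
        return 0.0
    if distance_m >= slow_m:
        return v_max
    return v_max * (distance_m - stop_m) / (slow_m - stop_m)
```

With obstacles at (3.0, 4.0) and (1.0, 0.75), the nearest one is 1.25 m away. The cap is therefore half of `v_max`. A person nearby sees the robot slow steadily rather than lurch to a halt.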

Together, these capabilities form the foundation of responsible autonomy, a concept that is gaining traction across the robotics industry as deployment scales.

Unlocking New Use Cases Across Construction and Infrastructure

The implications of advanced perception extend far beyond laboratory demonstrations. In construction, robots equipped with dense 3D vision could perform site inspections, monitor progress and identify safety risks in real time.

In transport infrastructure, autonomous systems could be deployed for maintenance tasks such as bridge inspections, tunnel surveys and rail corridor monitoring. These environments are often hazardous for human workers, making them ideal candidates for robotic intervention.

Logistics and warehousing also stand to benefit. As supply chains become more complex, the ability to deploy robots in mixed environments where humans and machines coexist is increasingly valuable. Advanced perception enables robots to navigate these spaces safely, improving efficiency without compromising safety.

Moreover, the integration of AI-driven perception with digital twins and Building Information Modelling is opening new possibilities. Robots could use real-time sensor data to update digital models of infrastructure assets, providing operators with accurate and up-to-date information for decision-making.

RealSense and the Evolution of the Robotics Ecosystem

The demonstration at GTC also highlights the broader role of RealSense within the robotics ecosystem. With more than a decade of experience in depth sensing technology, the company has developed a mature hardware and software platform that supports a wide range of applications.

Its active stereo depth technology, optimised for close and mid-range sensing, is particularly well suited to indoor and semi-structured environments. Combined with a robust SDK, it enables developers to prototype and scale robotic solutions more efficiently.
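Active stereo cameras project an infrared pattern to add texture to the scene, then match pixels between two rectified images. For a rectified pair with focal length f (pixels) and baseline B (metres), a match with disparity d gives depth Z = f·B/d. Differentiating gives a first-order depth error of roughly Z²/(f·B) times the disparity error. This helps explain why such cameras are tuned for close and mid-range work: depth error grows with the square of distance. The sketch below is the textbook relation, not RealSense's internal pipeline. The focal length, baseline and disparity error used with it are hypothetical values.

```python
from typing import Optional


def disparity_to_depth(disparity_px: float, focal_px: float, baseline_m: float) -> Optional[float]:
    """Depth from a rectified stereo pair: Z = f * B / d, in metres.

    Returns None when the disparity is zero or negative (no valid match).
    """
    if disparity_px <= 0:
        return None
    return focal_px * baseline_m / disparity_px


def depth_error(depth_m: float, focal_px: float, baseline_m: float, disparity_err_px: float) -> float:
    """First-order depth uncertainty: dZ ~= Z**2 / (f * B) * dd, in metres.

    Because of the Z**2 term, the error grows quadratically with distance.
    """
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_err_px
```

Assume f = 640 px, B = 0.05 m and a 0.1 px matching error. A 32 px disparity then corresponds to about 1 m, with roughly 3 mm of depth uncertainty. At 4 m the same matching error grows to about 5 cm, sixteen times larger.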

As the industry moves toward greater autonomy, such platforms become increasingly important. Standardised, reliable perception systems reduce development complexity and accelerate time to market, allowing companies to focus on higher-level capabilities such as decision-making and task execution.

At the same time, partnerships with companies like NVIDIA and LimX Dynamics demonstrate the value of ecosystem collaboration. By integrating complementary technologies, developers can create more capable and reliable systems than would be possible in isolation.

A Safer Path Forward for Humanoid Robotics

The transition from experimental prototypes to practical deployment is rarely straightforward, particularly in safety-critical industries such as construction and infrastructure. However, advances in perception technology are bringing that transition within reach.

By enabling robots to understand and navigate complex environments with a high degree of accuracy, systems like those demonstrated at GTC are addressing one of the key barriers to adoption. They are not just improving performance; they are redefining what it means for robots to operate safely alongside humans.

As humanoid robotics continues to evolve, perception will remain at the centre of the conversation. It is the foundation upon which autonomy, safety and trust are built. And as this latest demonstration shows, the industry is moving steadily closer to a future where robots are not just tools, but reliable partners in the delivery of infrastructure and industrial operations.