A Unified Architecture for the Autonomous Vehicle Era

Autonomous vehicle development has never really been limited by ambition. Instead, it has been constrained by economics. Over the past decade, most programmes have relied on costly sensor stacks, high-definition mapping campaigns and millions of kilometres of training data. The result has been technical progress paired with uncertain commercial viability, particularly for passenger vehicles intended for mass production rather than small robotaxi fleets.

Helm.ai’s latest expansion of its Driver software platform lands directly in that gap. Rather than treating Level 2 assistance, Level 3 conditional autonomy and Level 4 driverless operation as separate engineering problems, the company positions them as stages of a single software lifecycle. The same architecture, deployed today in a supervised assistance configuration, is intended to evolve into certified eyes-off autonomy as hardware capability and regulatory approval mature.

This matters to manufacturers because automotive development cycles span many years while safety certification frameworks move slowly. A platform that can start generating value immediately and then expand capability without replacement changes the financial equation. Carmakers no longer need parallel programmes for driver assistance and autonomy. They can deploy once and scale forward.

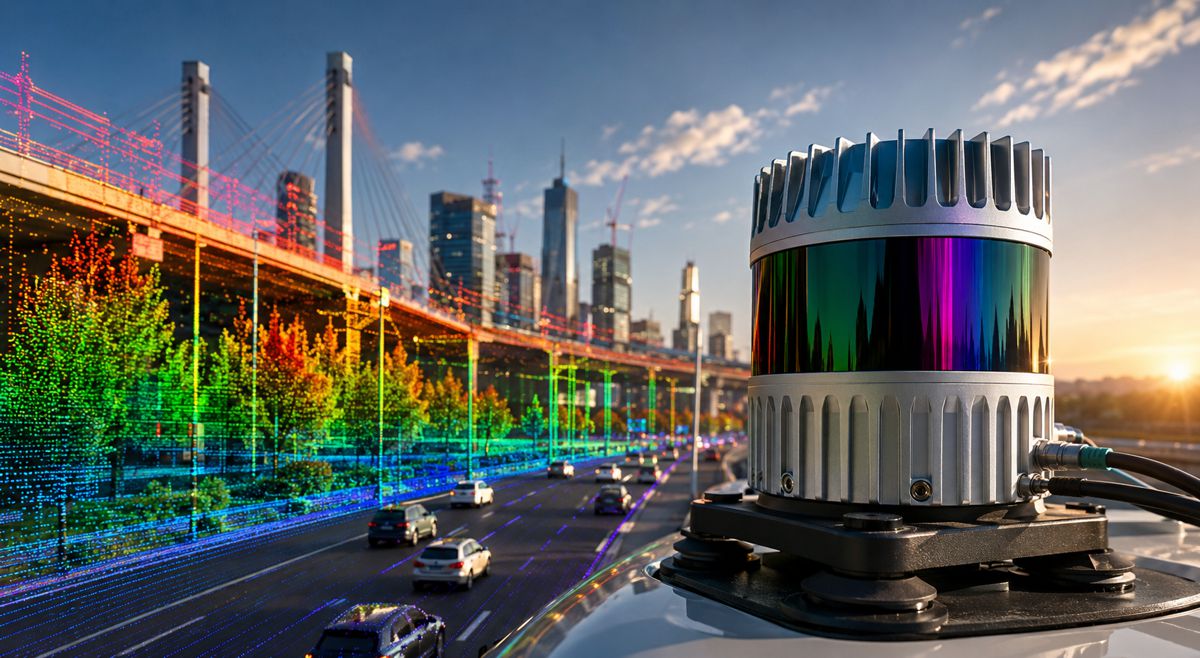

Why Vision-Only Systems Are Gaining Momentum

For years, lidar sensors and dense mapping were widely considered essential for safe automated driving. However, several industry trends are reshaping that assumption. Cameras have improved dramatically in dynamic range and low-light performance. Automotive compute platforms have become capable of running complex neural networks in real time. Meanwhile, regulatory frameworks increasingly prioritise explainability rather than raw sensor redundancy.

Helm.ai’s approach focuses on a camera-based perception system with no dependency on high-definition maps or lidar. That aligns with a growing segment of the industry that sees scalability as the decisive factor in autonomy deployment. Mapping each city individually has proven slow and expensive. Systems that interpret the environment directly offer a pathway to global deployment rather than local pilot zones.

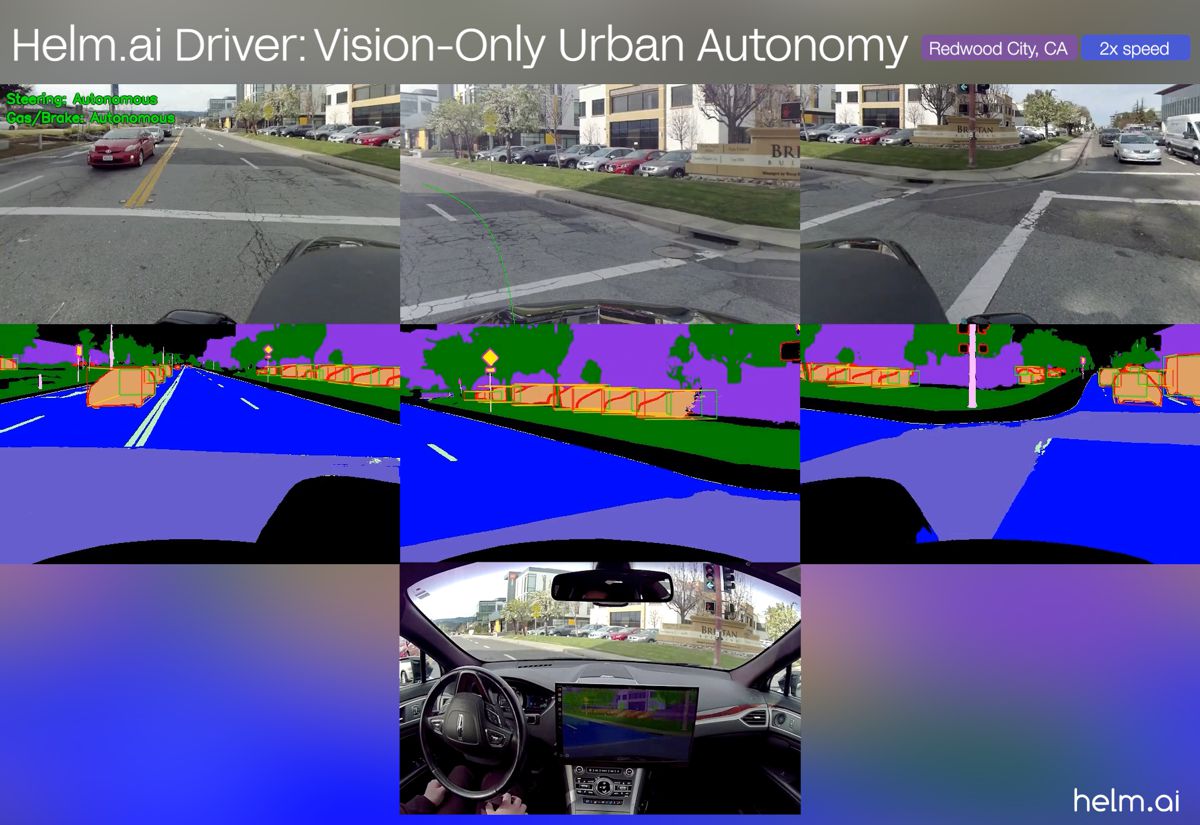

The company demonstrated the platform navigating intersections, traffic lights and urban interactions in Redwood City under safety supervision, reflecting standard testing practice across the sector. Such demonstrations are less about spectacle and more about validating that the architecture can manage routine complexity consistently. Handling that routine urban complexity, rather than highway cruising, remains the real barrier to certification.

Breaking the Autonomous Driving Data Wall

As automated systems improve, progress slows, because the failures that remain are the rare ones. Engineers call this the long-tail problem. Rare scenarios dominate safety risk, yet collecting enough real-world examples of them becomes ever more expensive. Many developers now refer to this barrier as the data wall.

Traditional end-to-end neural driving models compound the issue. Because they translate raw pixels directly into control commands, they offer limited interpretability. Regulators require evidence of the reasoning behind a system's behaviour, not just performance statistics. A model that cannot explain why it acted a certain way struggles to satisfy certification requirements beyond supervised operation.

Helm.ai attempts to resolve both problems simultaneously through what it calls a factored embodied AI architecture. The autonomy stack separates perception from decision-making. The perception stage converts sensor input into structured semantic understanding, including road geometry and object classification. The policy stage then reasons over that structured representation.

This separation enables auditing. Engineers can inspect what the system believed the world looked like before analysing its decision. From a certification perspective, that distinction is critical. Safety cases increasingly rely on traceability of behaviour rather than purely statistical validation.
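The separation described above can be illustrated with a minimal Python sketch. It is not Helm.ai's implementation: the `SceneState` schema, the rule-based `policy` function, the 30 m threshold and the `AuditLog` class are all hypothetical, chosen only to show how a policy that sees nothing but a structured scene leaves a record that an engineer can inspect afterwards.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class DetectedObject:
    kind: str            # e.g. "pedestrian", "vehicle" (hypothetical labels)
    distance_m: float    # distance ahead of the ego vehicle
    in_ego_lane: bool


@dataclass(frozen=True)
class SceneState:
    """Structured output of a perception stage (hypothetical schema)."""
    lane_curvature: float      # 1/m, a summary of road geometry
    traffic_light: str         # "red", "green" or "none"
    objects: tuple = ()


@dataclass(frozen=True)
class Decision:
    action: str
    reason: str


def policy(scene: SceneState, stop_distance_m: float = 30.0) -> Decision:
    """Toy rule-based policy that reasons only over the structured scene."""
    if scene.traffic_light == "red":
        return Decision("stop", "traffic light is red")
    for obj in scene.objects:
        if obj.in_ego_lane and obj.distance_m < stop_distance_m:
            return Decision(
                "brake", f"{obj.kind} in ego lane at {obj.distance_m:.0f} m"
            )
    return Decision("proceed", "no blocking condition in scene")


@dataclass
class AuditLog:
    """Stores each (believed scene, decision) pair for later inspection."""
    records: List[tuple] = field(default_factory=list)

    def step(self, scene: SceneState) -> Decision:
        decision = policy(scene)
        self.records.append((scene, decision))
        return decision


log = AuditLog()
scene = SceneState(0.0, "green", (DetectedObject("pedestrian", 12.0, True),))
decision = log.step(scene)
print(decision.action, "-", decision.reason)
```

Because every record pairs the scene the system believed it saw with the action it chose, a reviewer can tell a perception error (the wrong scene) apart from a policy error (the wrong action for a correct scene).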

From Driver Assistance to Eyes-Off Operation

Level 2 assistance is already common across the industry, but Level 3 remains rare. The gap exists largely because of legal responsibility. Once the system is allowed to operate without constant driver supervision, manufacturers must demonstrate predictable behaviour under defined conditions.

A level-agnostic architecture changes how that transition can be managed. Instead of rewriting software, companies can expand operational design domains. The same software brain evolves alongside sensors, compute capacity and regulation. That continuity simplifies validation because earlier deployments generate real-world operational evidence.
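One way to picture expanding an operational design domain without rewriting software is to treat each domain as configuration that the same code consults. The sketch below is a simplified illustration under that assumption. The `OperationalDesignDomain` class, the `active_mode` helper and every speed limit and road type in it are hypothetical values, not figures from Helm.ai or from any regulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperationalDesignDomain:
    """A set of conditions under which a driving feature may be active."""
    name: str
    max_speed_kph: float
    road_types: frozenset
    driver_supervision_required: bool

    def permits(self, road_type: str, speed_kph: float) -> bool:
        return road_type in self.road_types and speed_kph <= self.max_speed_kph


# Illustrative values only.
L2_PLUS = OperationalDesignDomain(
    "L2+", 130.0, frozenset({"highway", "urban"}), True
)
L3_TRAFFIC_JAM = OperationalDesignDomain(
    "L3 traffic jam", 60.0, frozenset({"highway"}), False
)


def active_mode(odds, road_type: str, speed_kph: float) -> str:
    """Return the first permitted domain, preferring unsupervised ones."""
    # False sorts before True, so eyes-off domains are tried first.
    ordered = sorted(odds, key=lambda odd: odd.driver_supervision_required)
    for odd in ordered:
        if odd.permits(road_type, speed_kph):
            return odd.name
    return "manual"


deployed = [L2_PLUS, L3_TRAFFIC_JAM]
print(active_mode(deployed, "highway", 50.0))
print(active_mode(deployed, "highway", 100.0))
print(active_mode(deployed, "rural", 50.0))
```

In this framing, adding a capability means appending a new domain to `deployed` once it has been approved, while the selection logic stays unchanged.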

“The industry has reached a tipping point where brute-force data collection is no longer commercially viable for high-end autonomy,” said Vladislav Voroninski, CEO and founder of Helm.ai. “With Helm.ai Driver, we have fundamentally changed the unit economics of scalable autonomy. By delivering a vision-first system that powers advanced Level 2+ today, and serves as the software brain for the transition to Level 3 and Level 4 autonomy, we are providing OEMs with the only realistic path to deploying next-generation autonomy on mass-market compute platforms.”

In practical terms, this reflects a shift from research prototypes to product planning. Carmakers need predictable upgrade paths rather than repeated platform resets.

Training Efficiency and the Role of Synthetic Learning

Autonomous driving datasets are famously expensive. Collecting, storing and manually labelling road scenarios costs vast sums, and rare events remain scarce all the same. Helm.ai addresses this through unsupervised learning methods and semantic simulation.

The company’s Deep Teaching technique allows neural networks to learn patterns from large volumes of non-driving visual data. Instead of relying solely on annotated driving footage, the system extracts understanding from broader visual contexts. That reduces reliance on human labelling, which has historically been a major cost centre.

Semantic simulation complements this by modelling geometry rather than photorealistic imagery. Training the policy network on structured scene representation avoids the computational overhead of rendering millions of synthetic images. It also focuses learning on spatial reasoning rather than visual appearance.
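A toy Python sketch can make the idea of simulating geometry instead of pixels concrete. It is an assumption-laden illustration, not the company's simulator: the `SemanticScene` fields, the sampling ranges and the `rare_event_rate` parameter are all invented for the example. Scenes are generated directly as structured data, so no image is ever rendered, and the label falls out of the geometry rather than from a human annotator.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SemanticScene:
    """A scene described by geometry alone (hypothetical schema)."""
    lane_width_m: float
    curvature_per_m: float
    pedestrian_offsets_m: tuple   # lateral offsets from the lane centre


def sample_scene(rng: random.Random, rare_event_rate: float = 0.3) -> SemanticScene:
    """Draw one scene; pedestrians appear at a rate we choose, not one we observe."""
    n_peds = rng.randint(1, 3) if rng.random() < rare_event_rate else 0
    return SemanticScene(
        lane_width_m=rng.uniform(2.7, 3.7),
        curvature_per_m=rng.uniform(-0.02, 0.02),
        pedestrian_offsets_m=tuple(rng.uniform(-5.0, 5.0) for _ in range(n_peds)),
    )


def pedestrian_in_lane(scene: SemanticScene) -> bool:
    """Ground-truth label computed from geometry, with no manual annotation."""
    half_width = scene.lane_width_m / 2
    return any(abs(offset) <= half_width for offset in scene.pedestrian_offsets_m)


rng = random.Random(0)   # fixed seed so the dataset is reproducible
scenes = [sample_scene(rng) for _ in range(1000)]
labelled = [(scene, pedestrian_in_lane(scene)) for scene in scenes]
print(len(labelled), "labelled scenes generated without rendering any images")
```

The `rare_event_rate` knob is the point of the exercise: scenarios that are scarce on real roads can be oversampled at will when the training input is a structured description.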

The result, according to the company, is urban driving capability achieved with around one thousand hours of real-world driving data. While independent validation will determine long-term performance, the approach reflects a broader industry direction toward simulation-assisted validation rather than pure mileage accumulation.

The Importance of Geographic Generalisation

Many autonomous deployments today remain geographically constrained. Vehicles operate within carefully mapped districts and struggle when moved elsewhere. For consumer vehicles, that limitation is commercially untenable. Drivers expect functionality everywhere, not just inside mapped zones.

Helm.ai demonstrated zero-shot operation in a different Californian city without prior location-specific training. The significance lies less in a single test and more in architectural philosophy. Systems that interpret rules and geometry rather than memorising environments are inherently portable.

Global scalability is crucial for manufacturers selling vehicles across continents. Regulatory approval in one region often depends on evidence that behaviour remains consistent in others. Eliminating city-by-city training reduces deployment cost and accelerates certification timelines.

Implications for the Automotive Supply Chain

If scalable camera-based autonomy proves viable, its impact will extend beyond software developers. Sensor suppliers, mapping companies and compute vendors will all see shifting demand patterns. The industry has historically oscillated between sensor-heavy redundancy and software-centric intelligence. A successful vision-first model could tilt investment toward compute efficiency and AI architecture rather than hardware complexity.

Infrastructure planning may also adapt. Instead of specialised connected corridors, authorities may prioritise clearer road markings and standardised signage that benefit both human and machine perception. That aligns with existing road safety initiatives aimed at improving visibility and consistency.

For policymakers, explainable autonomy architectures simplify regulation. Certification frameworks rely on traceable decision processes. Systems capable of demonstrating how they interpreted an environment and why they acted provide regulators with a clearer audit path than opaque neural control outputs.

A Gradual Path to Driverless Mobility

Fully driverless vehicles remain a long-term objective rather than an immediate reality for most markets. However, incremental capability upgrades are already reshaping the driving experience. Advanced assistance reduces fatigue, improves safety margins and prepares users for higher autonomy levels.

The key question is not whether Level 4 vehicles will exist but how they will be deployed economically at scale. Platforms that evolve continuously rather than restarting at each autonomy level reduce risk for manufacturers and investors alike.

Helm.ai’s expanded Driver platform represents one interpretation of that path. By merging assistance and autonomy development into a single software progression, it reflects an industry transition from experimental robotics projects to long-lifecycle consumer products. If the approach proves reliable under regulatory scrutiny, it could influence how future vehicles are architected from the outset.