NVIDIA Dynamo 1.0 Sets the Pace for Scalable AI Across Global Infrastructure

For years, the race in artificial intelligence revolved around training ever larger models. That phase, while still critical, is no longer the primary constraint. Today, the real pressure point sits firmly on inference, the moment when AI models are put to work in the real world, responding to queries, powering applications and driving decision making across industries.

As generative and agentic AI systems move from controlled pilot environments into full scale deployment, infrastructure demands have shifted dramatically. Data centres are no longer dealing with predictable workloads. Instead, they must handle bursts of complex, multimodal requests, often in real time, across globally distributed systems. For infrastructure operators, cloud providers and enterprise platforms, that introduces a new layer of operational complexity that can’t be solved with hardware alone.

NVIDIA has now introduced Dynamo 1.0, an open source software layer designed to orchestrate AI inference at scale. While the headline numbers focus on performance gains, the broader story is about control, efficiency and the evolution of AI infrastructure into something far more dynamic and industrialised.

From Hardware Power to Software Orchestration

The emergence of high performance GPUs such as those in the NVIDIA Blackwell platform has undeniably pushed the boundaries of computational capability. However, raw power without coordination often leads to inefficiencies. Idle cycles, memory bottlenecks and fragmented workloads can undermine even the most advanced hardware investments.

Dynamo 1.0 addresses this by acting as a distributed orchestration layer across GPU clusters. In effect, it behaves like an operating system for AI factories, coordinating compute, memory and data movement across thousands, potentially millions, of processing units. That shift mirrors the evolution seen in traditional computing, where operating systems transformed raw hardware into usable, scalable platforms.

The implications for infrastructure are significant. Instead of treating GPUs as isolated accelerators, Dynamo enables them to function as part of a coordinated system. Requests are dynamically routed, workloads are balanced in real time and memory is managed more intelligently, allowing data centres to extract far greater efficiency from existing resources.
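To make "dynamically routed" and "balanced in real time" more concrete, here is a minimal Python sketch of one common policy, least-loaded routing. Each new request goes to whichever GPU worker currently has the least queued work, and that capacity is released again when a request finishes. This is an illustration under stated assumptions, not Dynamo's implementation. The `LeastLoadedRouter` and `WorkerLoad` names, their methods, and the use of outstanding tokens as the load signal are all hypothetical.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class WorkerLoad:
    # Ordering compares only outstanding_tokens, so the heap always yields the least-loaded GPU.
    outstanding_tokens: int
    name: str = field(compare=False)


class LeastLoadedRouter:
    """Hypothetical router: send each request to the GPU worker with the least queued work."""

    def __init__(self, names):
        self._heap = [WorkerLoad(0, n) for n in names]
        heapq.heapify(self._heap)

    def route(self, request_tokens: int) -> str:
        # Pop the least-loaded worker, charge it for the new request, and push it back.
        worker = heapq.heappop(self._heap)
        worker.outstanding_tokens += request_tokens
        heapq.heappush(self._heap, worker)
        return worker.name

    def finish(self, name: str, request_tokens: int) -> None:
        # Release capacity when a request completes, then restore the heap ordering.
        for w in self._heap:
            if w.name == name:
                w.outstanding_tokens -= request_tokens
                break
        heapq.heapify(self._heap)

    def loads(self) -> dict:
        return {w.name: w.outstanding_tokens for w in self._heap}
```

A heap keeps each routing decision at O(log n) in the number of workers. A production scheduler would weigh richer signals than queued tokens, such as memory pressure and cache locality.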

Unlocking Performance Gains Where It Matters Most

One of the most striking aspects of Dynamo 1.0 is its reported ability to boost inference performance on NVIDIA Blackwell GPUs by up to seven times in certain scenarios. While benchmark figures always require context, the underlying principle is clear. Better orchestration leads to better utilisation, and better utilisation translates directly into economic value.

For operators running large scale AI infrastructure, performance improvements at the inference stage can dramatically reduce the cost per token. That, in turn, reshapes the economics of AI services, making them more viable for a wider range of applications, from real time logistics optimisation to predictive maintenance in construction and infrastructure networks.

Equally important is the impact on revenue potential. With more efficient inference, the same hardware footprint can support a higher volume of queries and services. For cloud providers and AI platforms, that effectively turns software optimisation into a revenue multiplier, without the need for additional capital expenditure on hardware.
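The cost and capacity argument comes down to simple arithmetic. The sketch assumes a hypothetical GPU price of $3 per hour and a baseline of 1,000 output tokens per second per GPU. Neither figure comes from NVIDIA. The sevenfold case uses the "up to seven times" best-case figure reported above, not a typical result.

```python
def cost_per_million_tokens(gpu_hour_cost: float, tokens_per_second: float) -> float:
    """Serving cost per one million output tokens for one GPU running at full utilisation."""
    tokens_per_hour = tokens_per_second * 3600
    return gpu_hour_cost / tokens_per_hour * 1_000_000


def daily_token_capacity(num_gpus: int, tokens_per_second: float, utilisation: float) -> float:
    """Tokens a fixed fleet can serve per day at a given average utilisation (0 to 1)."""
    return num_gpus * tokens_per_second * utilisation * 86_400


# Illustrative inputs only (assumed price and throughput, not vendor data).
baseline = cost_per_million_tokens(3.0, 1_000)  # about $0.83 per million tokens
improved = cost_per_million_tokens(3.0, 7_000)  # about $0.12 per million tokens
```

Because the hardware cost is fixed, any throughput multiplier divides the cost per token by the same factor. It also multiplies the daily token capacity of the same fleet, which is the "revenue multiplier" effect described above.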

Managing Complexity in Agentic AI Systems

Agentic AI introduces a further layer of complexity. Unlike traditional models that respond to single prompts, agentic systems operate through sequences of interactions, often maintaining context across multiple steps. This creates challenges in both memory management and data locality, as relevant information must be readily accessible across distributed systems.

Dynamo addresses this through a combination of intelligent routing and memory handling. Requests can be directed to GPUs that already hold relevant short term context, reducing the need to reload data and improving response times. When that context is no longer required, it can be offloaded to lower cost storage, freeing up high performance memory for other tasks.
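The routing and offloading behaviour described above can be sketched with two ideas. First, hash each request's tokens in fixed-size blocks so that shared context from earlier turns can be recognised. Second, keep each GPU's cached blocks in least-recently-used order, so the coldest blocks move to a cheaper tier when GPU memory fills. Everything here is a hypothetical illustration, not Dynamo's router or memory manager API: `block_hashes`, `Worker`, `route`, the four-token block size and the load penalty are all invented for the example.

```python
from collections import OrderedDict

BLOCK_SIZE = 4  # tokens per cache block (illustrative; real systems use larger blocks)


def block_hashes(tokens):
    """Chain-hash full token blocks so each hash identifies the entire prefix up to that block."""
    hashes, parent = [], 0
    for i in range(0, len(tokens) - len(tokens) % BLOCK_SIZE, BLOCK_SIZE):
        parent = hash((parent, tuple(tokens[i:i + BLOCK_SIZE])))
        hashes.append(parent)
    return hashes  # a trailing partial block is ignored


class Worker:
    def __init__(self, name, gpu_blocks):
        self.name = name
        self.gpu_capacity = gpu_blocks  # assumed to be at least 1
        self.gpu = OrderedDict()        # block hash -> None, least to most recently used
        self.host = set()               # blocks offloaded to cheaper CPU memory or storage
        self.active = 0                 # requests currently running on this worker

    def cached_prefix(self, hashes):
        # Count leading blocks already in GPU memory; reuse stops at the first miss.
        n = 0
        for h in hashes:
            if h not in self.gpu:
                break
            n += 1
        return n

    def admit(self, hashes):
        for h in hashes:
            if h in self.gpu:
                self.gpu.move_to_end(h)  # refresh recency
                continue
            self.host.discard(h)  # promote the block if it had been offloaded
            if len(self.gpu) >= self.gpu_capacity:
                evicted, _ = self.gpu.popitem(last=False)  # least recently used block
                self.host.add(evicted)  # offload rather than discard
            self.gpu[h] = None

    def release(self):
        # Call when a request on this worker completes.
        self.active = max(0, self.active - 1)


def route(workers, tokens, load_weight=1.0):
    """Pick the worker with the most reusable prefix blocks, penalised by its current load."""
    hashes = block_hashes(tokens)
    # max() returns the first worker on ties, so routing is deterministic.
    best = max(workers, key=lambda w: w.cached_prefix(hashes) - load_weight * w.active)
    best.admit(hashes)
    best.active += 1
    return best
```

In a multi-turn conversation, the second turn's prompt begins with the first turn's tokens. The router therefore sends it back to the worker that already holds those blocks, and only the new block has to be computed. The `load_weight` parameter trades cache reuse against spreading work evenly.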

This approach reflects a broader trend in infrastructure design, where the focus is shifting from static allocation to dynamic resource management. In industries such as transport and construction, similar principles are already being applied through smart traffic systems and adaptive asset management. The parallel is clear. AI infrastructure is beginning to adopt the same logic of responsiveness and efficiency.

Integration Across the Open Source Ecosystem

A key factor in the rapid adoption of Dynamo 1.0 lies in its integration with established open source frameworks. Rather than introducing a closed ecosystem, NVIDIA has embedded Dynamo and its TensorRT-LLM optimisations into widely used platforms such as LangChain, vLLM, SGLang, LMCache and llm-d.

This approach lowers the barrier to entry for developers and organisations looking to scale their AI capabilities. Existing workflows can be enhanced without the need for complete system overhauls, allowing teams to benefit from performance gains while maintaining flexibility.

Beyond integration, NVIDIA has also made core components of Dynamo available as standalone modules. Technologies such as advanced memory management systems, high speed GPU data transfer mechanisms and simplified scaling tools can be adopted independently, enabling a more modular approach to infrastructure development.

This aligns with a broader industry shift towards composable architectures, where systems are built from interoperable components rather than monolithic platforms. For construction and infrastructure stakeholders, the analogy is straightforward. Modular design enables faster deployment, easier upgrades and greater resilience over time.

Global Adoption Signals a Structural Shift

The scale of adoption for the NVIDIA inference platform highlights how quickly the industry is moving. Major cloud providers, including Amazon Web Services, Microsoft Azure, Google Cloud and Oracle Cloud Infrastructure, have integrated the platform into their offerings. At the same time, a growing network of specialised cloud partners and AI-native companies is building services on top of it.

Enterprises across sectors are also engaging with the technology. From financial services and e-commerce to digital platforms and logistics, the demand for scalable AI inference is becoming universal. Companies such as PayPal, Pinterest and ByteDance are leveraging these capabilities to deliver real time, personalised experiences to global user bases.

This level of adoption suggests that AI inference is no longer a niche capability. It is becoming a core layer of digital infrastructure, much like cloud computing did in the previous decade. For policymakers and investors, that signals a shift in where value is being created. The focus is moving from model development to operational deployment at scale.

Implications for Construction and Infrastructure

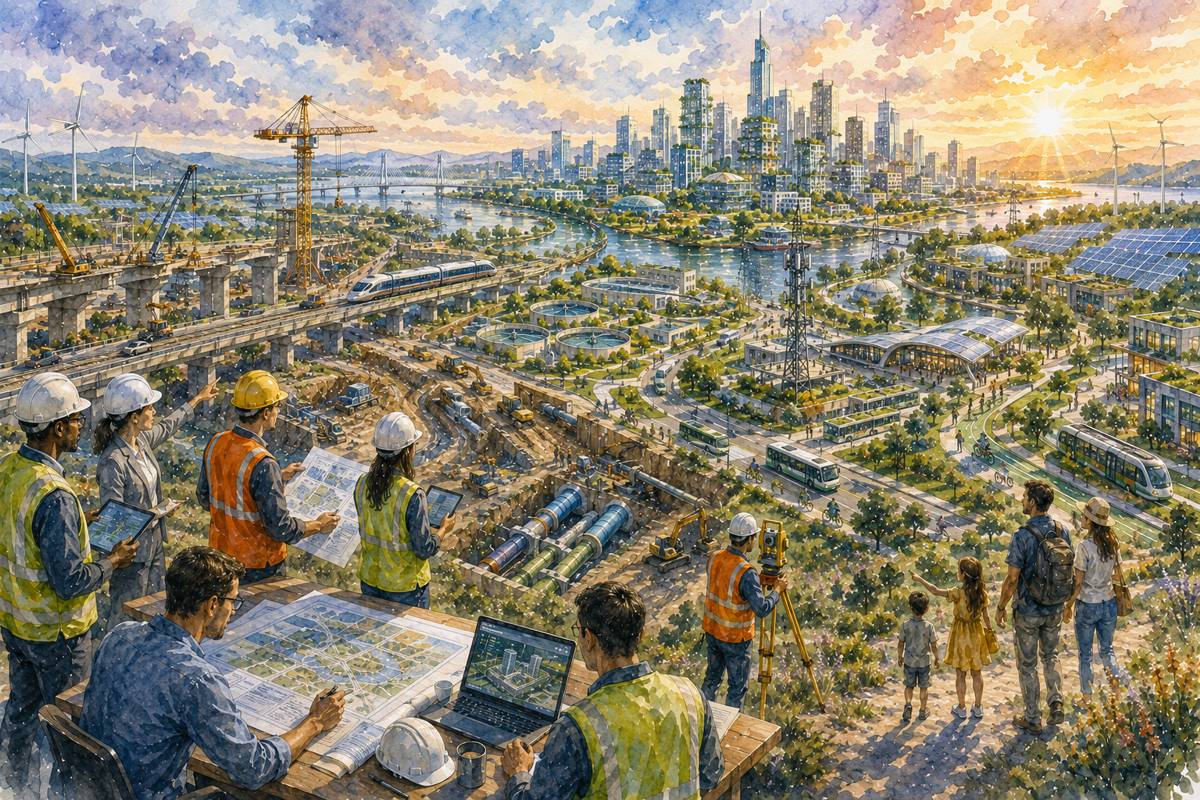

While the immediate applications of Dynamo 1.0 are rooted in data centres and cloud platforms, the ripple effects extend far beyond the technology sector. Construction, transport and infrastructure industries are increasingly reliant on real time data and AI driven decision making.

From autonomous construction equipment and smart traffic management systems to predictive maintenance of critical assets, the ability to process data efficiently at scale is becoming essential. Inference, rather than training, is what powers these real world applications. Faster, more efficient inference directly translates into safer, more responsive and more cost effective infrastructure systems.

Moreover, as infrastructure projects become more digitally integrated, the demand for scalable AI platforms will only increase. Digital twins, IoT networks and advanced analytics all rely on continuous streams of data that must be processed in real time. Technologies like Dynamo provide the backbone for these capabilities, enabling infrastructure systems to operate with greater intelligence and adaptability.

The Economics of Open Source AI Infrastructure

Another noteworthy aspect of Dynamo 1.0 is its open source nature. By making the software freely available, NVIDIA is accelerating adoption while shaping the direction of the broader ecosystem. This strategy mirrors the success of open source platforms in other areas of technology, where widespread collaboration has driven rapid innovation.

For businesses and developers, open source reduces the cost of experimentation and deployment. It allows organisations to build on proven foundations while customising solutions to their specific needs. At the same time, it fosters a competitive environment where performance and efficiency become key differentiators.

From an economic perspective, this approach also helps to expand the total addressable market for AI. Lower costs and improved performance make AI applications viable in sectors that may have previously been excluded due to budget constraints. For the construction and infrastructure industries, that opens the door to broader adoption of advanced technologies.

A Foundation for the Next Phase of AI Deployment

The introduction of Dynamo 1.0 marks a shift in how AI infrastructure is conceptualised and deployed. It is not simply about faster GPUs or more powerful models. It is about creating systems that can manage complexity, scale efficiently and deliver consistent performance under real world conditions.

As AI continues to permeate every aspect of industry, the importance of inference will only grow. The ability to deliver intelligence on demand, at scale and at a sustainable cost, will define the next generation of digital infrastructure.

NVIDIA’s approach, combining high performance hardware with open source orchestration software, provides a glimpse of how this future might take shape. It is a model that emphasises integration, efficiency and scalability, qualities that resonate strongly with the needs of modern infrastructure systems.