NVIDIA Spectrum-X Powers the Next Wave of Gigascale AI Infrastructure

Artificial intelligence infrastructure has become the latest battleground in global technology development, and the pressure on networking systems is mounting fast. While much of the public conversation centres on GPUs and ever-larger language models, the real challenge inside modern AI factories often comes down to moving colossal volumes of data without bottlenecks, delays or failures.

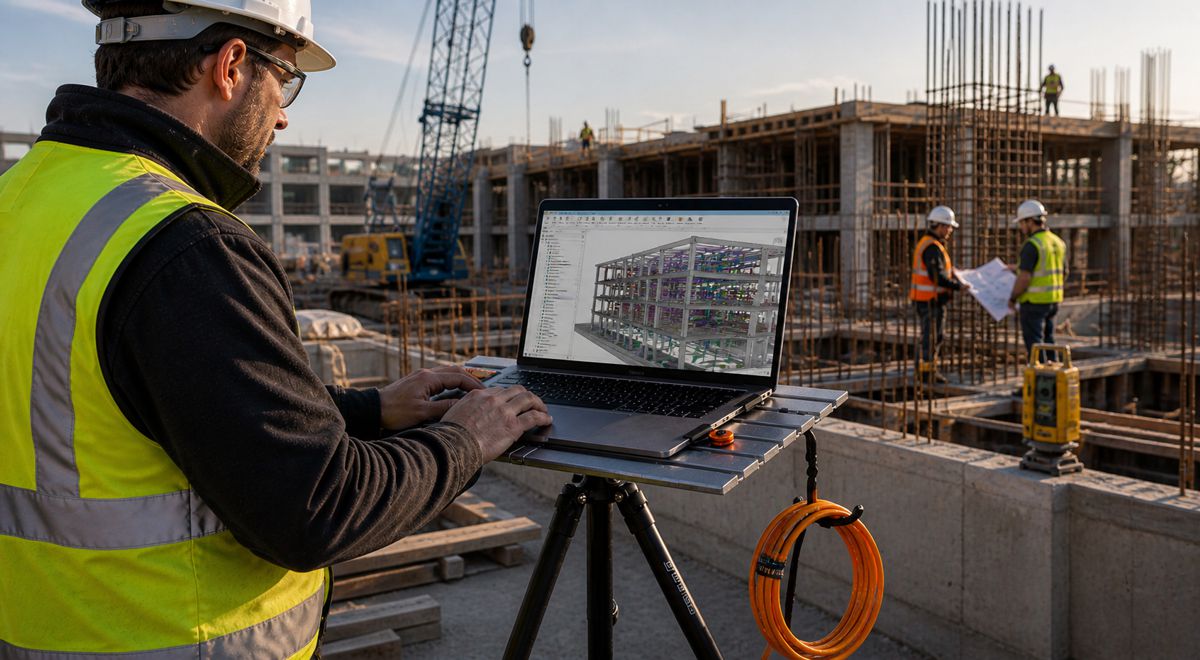

That’s where networking architecture has suddenly become mission-critical. Training frontier AI models across tens of thousands of GPUs demands more than raw compute power. It requires ultra-fast, resilient and intelligent communication between systems operating simultaneously across vast data centre environments. Even a split-second disruption can leave thousands of expensive accelerators sitting idle, burning energy while productivity grinds to a halt.

NVIDIA is positioning its Spectrum-X Ethernet platform as a cornerstone technology for the next generation of AI factories. The company’s latest developments around Multipath Reliable Connection, known as MRC, reflect how rapidly the industry is evolving beyond conventional networking approaches as hyperscale operators race to scale AI systems into previously unimaginable territory.

The significance stretches far beyond Silicon Valley engineering circles. AI networking is quickly becoming strategic infrastructure, shaping the competitiveness of cloud providers, governments, industrial operators and enterprises investing in sovereign AI capabilities. Whoever masters high-performance AI fabrics effectively controls the speed, efficiency and economics of future AI development.

Briefing

- NVIDIA Spectrum-X Ethernet is being deployed in large-scale AI factories operated by OpenAI, Microsoft and Oracle.

- Multipath Reliable Connection (MRC) enables AI traffic to move across multiple network paths simultaneously for greater throughput and resilience.

- Hardware-accelerated failover and multiplane network architecture help maintain synchronisation across thousands of GPUs.

- OpenAI says MRC helped reduce network slowdowns and interruptions during frontier AI training runs.

- NVIDIA and industry partners have released MRC as an open specification through the Open Compute Project.

AI Factories Are Creating a New Infrastructure Arms Race

The term “AI factory” has rapidly entered the vocabulary of hyperscale computing, and for good reason. These facilities differ fundamentally from traditional data centres. Rather than supporting general-purpose enterprise workloads, AI factories are purpose-built environments designed to train and deploy advanced AI models at extraordinary scale.

That shift changes the infrastructure equation completely. Conventional cloud networking architectures struggle when faced with synchronised GPU clusters exchanging enormous data volumes continuously during distributed AI training operations. As models grow from billions to trillions of parameters, network performance increasingly determines whether expensive compute resources are fully utilised or left waiting for data transfers to complete.

Industry analysts at firms such as Gartner and IDC have repeatedly highlighted networking as one of the emerging constraints in hyperscale AI expansion. Latency, congestion management, fault tolerance and energy efficiency are now central considerations for operators building frontier AI systems.

The scale is staggering. Microsoft’s Fairwater infrastructure project and Oracle Cloud Infrastructure’s Abilene data centre are among the facilities being developed specifically to handle frontier AI workloads. These environments are designed to support massive GPU deployments operating continuously under demanding conditions where downtime and inefficiency carry enormous financial implications.

Multipath Reliable Connection Changes the Traffic Flow

At the heart of NVIDIA’s latest networking push sits MRC, a transport protocol developed collaboratively with companies including OpenAI, Microsoft, AMD, Intel and Broadcom.

Traditionally, an RDMA (Remote Direct Memory Access) connection sends its traffic over a single network path between two systems. MRC changes that model by enabling a single connection to distribute traffic across multiple paths simultaneously.

The practical impact resembles redesigning a congested motorway into an interconnected urban grid where traffic can instantly reroute around disruptions. Instead of relying on one fixed route, AI workloads can dynamically shift data flows across multiple available pathways in real time.
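As a rough illustration of that difference, the Python sketch below contrasts a flow pinned to one path with a single connection spread across several. The round-robin policy, the function names and the packet counts are assumptions made for this example. They are not taken from the MRC specification, which may balance traffic quite differently.

```python
from collections import Counter


def single_path(flow_id: int, num_packets: int, num_paths: int) -> Counter:
    """Pin every packet of a flow to one path derived from the flow id."""
    path = flow_id % num_paths
    return Counter({path: num_packets})


def multipath_spray(num_packets: int, healthy_paths: list[int]) -> Counter:
    """Spread the packets of one connection round-robin over healthy paths."""
    load = Counter()
    for seq in range(num_packets):
        load[healthy_paths[seq % len(healthy_paths)]] += 1
    return load


# Hypothetical fabric with four paths, numbered 0 to 3.
pinned = single_path(flow_id=7, num_packets=1000, num_paths=4)
sprayed = multipath_spray(num_packets=1000, healthy_paths=[0, 1, 2, 3])
# Path 2 is treated as failed, so the sender simply leaves it out.
rerouted = multipath_spray(num_packets=1000, healthy_paths=[0, 1, 3])

print("pinned  :", dict(pinned))
print("sprayed :", dict(sprayed))
print("rerouted:", dict(rerouted))
```

In the pinned case one path carries all 1,000 packets while the others sit unused. In the sprayed case each path carries a quarter of the load, and dropping a path only shifts its share onto the remaining three.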

That capability matters enormously at hyperscale. AI training operations rely on tightly synchronised GPU clusters where delays affecting one node can ripple across an entire workload. Maintaining balanced traffic distribution becomes essential for preserving throughput and avoiding costly slowdowns.

“Deploying MRC in the Blackwell generation was very successful and was made possible by a strong collaboration with NVIDIA,” said Sachin Katti, head of industrial compute at OpenAI. “MRC’s end-to-end approach enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale.”

The comments offer a rare glimpse into the operational realities facing leading AI developers. Frontier model training is no longer simply about deploying more GPUs. The surrounding infrastructure ecosystem now plays an equally decisive role.

Networking Failures Can Derail Entire AI Training Runs

Large-scale AI systems are remarkably sensitive to interruptions. In conventional enterprise environments, brief network slowdowns might go unnoticed. In AI training clusters involving thousands of GPUs operating in parallel, however, disruptions can cascade rapidly. That’s why NVIDIA’s emphasis on hardware-level resilience has become increasingly important.

Spectrum-X Ethernet incorporates failure bypass technology capable of detecting path failures and rerouting traffic within microseconds. Rather than waiting for software-based recovery mechanisms, the system responds directly at hardware speed to maintain continuity across the network fabric.

For operators managing multi-billion-dollar AI investments, that capability carries substantial economic weight. GPU idle time represents lost productivity, wasted power consumption and delayed training schedules. Maintaining high GPU utilisation rates has therefore become one of the key performance metrics in modern AI infrastructure.

The networking challenge becomes even more pronounced as systems scale toward hundreds of thousands of GPUs. Synchronisation requirements intensify while the probability of transient failures naturally increases within such enormous environments.
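The effect of scale on failure probability can be sketched with basic arithmetic. The example below assumes that each component fails independently with the same small probability during some time window. Both the independence assumption and the probability value are hypothetical, chosen only to show the shape of the curve.

```python
def prob_any_failure(per_component_prob: float, components: int) -> float:
    """Probability that at least one of `components` independent parts fails."""
    return 1.0 - (1.0 - per_component_prob) ** components


# Hypothetical per-component failure probability within one time window.
P_FAIL = 1e-5

small_cluster = prob_any_failure(P_FAIL, 1_000)
large_cluster = prob_any_failure(P_FAIL, 100_000)

print(f"1,000 components  : {small_cluster:.4f}")
print(f"100,000 components: {large_cluster:.4f}")
```

Under these assumptions, the chance of at least one failure in the window rises from roughly 1% with 1,000 components to roughly 63% with 100,000, even though no individual component has become less reliable.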

MRC addresses this through intelligent retransmission capabilities designed to recover rapidly from packet loss while minimising disruption to long-running AI jobs. Combined with dynamic load balancing, the system attempts to sustain bandwidth performance even under congested conditions.
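The source does not describe how MRC's retransmission works internally. One common way to limit the cost of packet loss is selective retransmission, where only the missing packets are resent, and over paths that are still healthy. The sketch below illustrates that general idea. Its function names, the round-robin placement and the path numbering are all assumptions, not MRC's actual mechanism.

```python
def lost_on_path(num_packets: int, paths: list[int], failed_path: int) -> list[int]:
    """Sequence numbers that were placed round-robin onto the failed path."""
    return [
        seq for seq in range(num_packets)
        if paths[seq % len(paths)] == failed_path
    ]


def retransmit_plan(missing: list[int], paths: list[int], failed_path: int) -> dict[int, int]:
    """Map each missing sequence number to a healthy path for resending."""
    healthy = [p for p in paths if p != failed_path]
    if not healthy:
        raise RuntimeError("no healthy path left for retransmission")
    return {seq: healthy[i % len(healthy)] for i, seq in enumerate(missing)}


# Hypothetical scenario: 8 packets over 4 paths, and path 2 fails mid-transfer.
paths = [0, 1, 2, 3]
missing = lost_on_path(num_packets=8, paths=paths, failed_path=2)
plan = retransmit_plan(missing, paths, failed_path=2)

print("missing sequences:", missing)
print("retransmit plan  :", plan)
```

Only the two packets that travelled over the failed path are resent. The other six are left alone, which is why this style of recovery disturbs a long-running job far less than restarting the whole transfer.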

Multiplane Networks Push Scalability Further

Another important development emerging from these deployments involves multiplane network architectures.

OpenAI is among the organisations deploying multiplane network designs alongside Spectrum-X Ethernet and MRC. In practical terms, a multiplane architecture creates multiple independent network fabrics, each capable of serving as an alternative communication path between GPUs.

This approach improves both resilience and scalability. If one network plane encounters congestion or disruption, workloads can continue operating across alternative paths without bringing the broader training environment to a halt.
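A minimal sketch of plane selection, assuming a simple least-loaded policy over healthy planes, might look like the following. The `Plane` class, the plane names and the policy itself are hypothetical and written in software for clarity. NVIDIA describes its own load balancing as hardware-accelerated and does not detail the algorithm in this announcement.

```python
from dataclasses import dataclass


@dataclass
class Plane:
    """One independent network fabric connecting the same set of GPUs."""
    name: str
    healthy: bool = True
    queued_bytes: int = 0


def pick_plane(planes: list[Plane]) -> Plane:
    """Choose the healthy plane with the least queued traffic."""
    candidates = [p for p in planes if p.healthy]
    if not candidates:
        raise RuntimeError("no healthy network plane available")
    return min(candidates, key=lambda p: p.queued_bytes)


def send(planes: list[Plane], size_bytes: int) -> str:
    """Queue a message on the chosen plane and return that plane's name."""
    plane = pick_plane(planes)
    plane.queued_bytes += size_bytes
    return plane.name


# Hypothetical four-plane fabric in which plane-1 has been marked as failed.
planes = [Plane(f"plane-{i}") for i in range(4)]
planes[1].healthy = False

order = [send(planes, 4096) for _ in range(4)]
print(order)
```

Traffic keeps flowing across the three remaining planes, and the failed plane is never selected until it is marked healthy again.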

NVIDIA says its Spectrum-X Multiplane capability provides hardware-accelerated load balancing across these planes, helping maintain predictable latency while supporting massive AI environments.

That predictability matters enormously for distributed AI training. Variations in latency between nodes can create synchronisation issues that degrade overall performance. Maintaining consistent communication timing across thousands of interconnected GPUs becomes just as important as raw bandwidth figures.
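The cost of uneven latency follows from how synchronous training works: a step cannot finish until the slowest participant has finished communicating. The sketch below models that with deliberately simplified, hypothetical numbers, and it assumes that compute and communication do not overlap.

```python
def step_time_ms(compute_ms: float, comm_ms_per_gpu: list[float]) -> float:
    """A synchronous step ends only when the slowest GPU finishes communicating."""
    return compute_ms + max(comm_ms_per_gpu)


# Hypothetical cluster of 1,024 GPUs with 100 ms of compute per step.
uniform = [10.0] * 1024
one_slow = [10.0] * 1023 + [25.0]  # a single GPU sees a delayed transfer

baseline = step_time_ms(100.0, uniform)
delayed = step_time_ms(100.0, one_slow)

print(f"baseline step: {baseline} ms")
print(f"delayed step : {delayed} ms")
print(f"slowdown     : {delayed / baseline:.2f}x")
```

In this toy model a single slow link out of 1,024 stretches every step for the whole cluster, which is why tail latency can matter as much as average bandwidth.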

The broader industry trend is clear. AI networking is evolving from traditional hierarchical data centre designs toward increasingly intelligent, adaptive and software-aware fabrics built specifically around AI workload behaviour.

Open Standards Are Becoming Increasingly Important

One notable aspect of the MRC announcement is the decision to release the protocol specification through the Open Compute Project.

That move reflects growing pressure across the industry for open infrastructure standards capable of supporting increasingly heterogeneous AI ecosystems.

While NVIDIA remains dominant in AI acceleration hardware, hyperscale operators and cloud providers are simultaneously seeking flexibility, interoperability and protection against vendor lock-in. Open standards can help operators integrate technologies across broader infrastructure environments while accelerating adoption.

The collaboration itself also highlights how competitive dynamics within AI infrastructure are shifting. Despite intense rivalry in semiconductors and cloud computing, companies including AMD, Intel, Broadcom, Microsoft, OpenAI and NVIDIA are cooperating on foundational infrastructure technologies needed to support the broader AI economy.

That cooperation is partly pragmatic. The engineering complexity involved in scaling AI systems has become so immense that infrastructure interoperability increasingly benefits the entire ecosystem.

Ethernet Is Challenging Traditional AI Networking Assumptions

For years, InfiniBand dominated high-performance computing environments requiring low latency and ultra-fast interconnects. NVIDIA’s Spectrum-X strategy reflects a broader industry effort to push Ethernet deeper into high-performance AI infrastructure.

Ethernet’s advantages include familiarity, ecosystem maturity and widespread deployment across global data centre environments. By enhancing Ethernet with AI-specific intelligence, adaptive routing and RDMA optimisation, vendors hope to combine scalability with operational flexibility.

Spectrum-X Ethernet supports both Adaptive RDMA and MRC transport models while also accommodating custom protocols. That flexibility gives operators greater freedom to tailor networking approaches around specific workload requirements.

The strategy aligns with growing demand for composable infrastructure where compute, storage and networking resources can be dynamically orchestrated according to changing AI workloads.

As AI systems continue expanding into industrial automation, autonomous transport, robotics, infrastructure monitoring and digital twin environments, networking flexibility may become just as valuable as raw performance.

The Infrastructure Behind AI Is Becoming Strategic

Much of the AI conversation still focuses on software breakthroughs and increasingly sophisticated models, yet the underlying infrastructure race may ultimately prove just as consequential.

Countries, hyperscalers and enterprises are now investing billions into AI factories that depend on stable, scalable and resilient networking fabrics capable of sustaining enormous computational loads continuously.

For construction, infrastructure and industrial technology sectors, the implications are substantial. AI-driven digital twins, intelligent transport systems, autonomous machinery, predictive maintenance platforms and smart infrastructure management all depend on scalable computing ecosystems operating behind the scenes.

Networking platforms such as Spectrum-X Ethernet are becoming foundational layers within that broader digital transformation.

As AI infrastructure expands globally, the winners may not simply be those with the fastest chips or largest models. Increasingly, success could hinge on who can build the most efficient, reliable and scalable infrastructure ecosystems capable of keeping vast AI factories running smoothly around the clock.