Powering AI Anywhere with Modular Edge Infrastructure

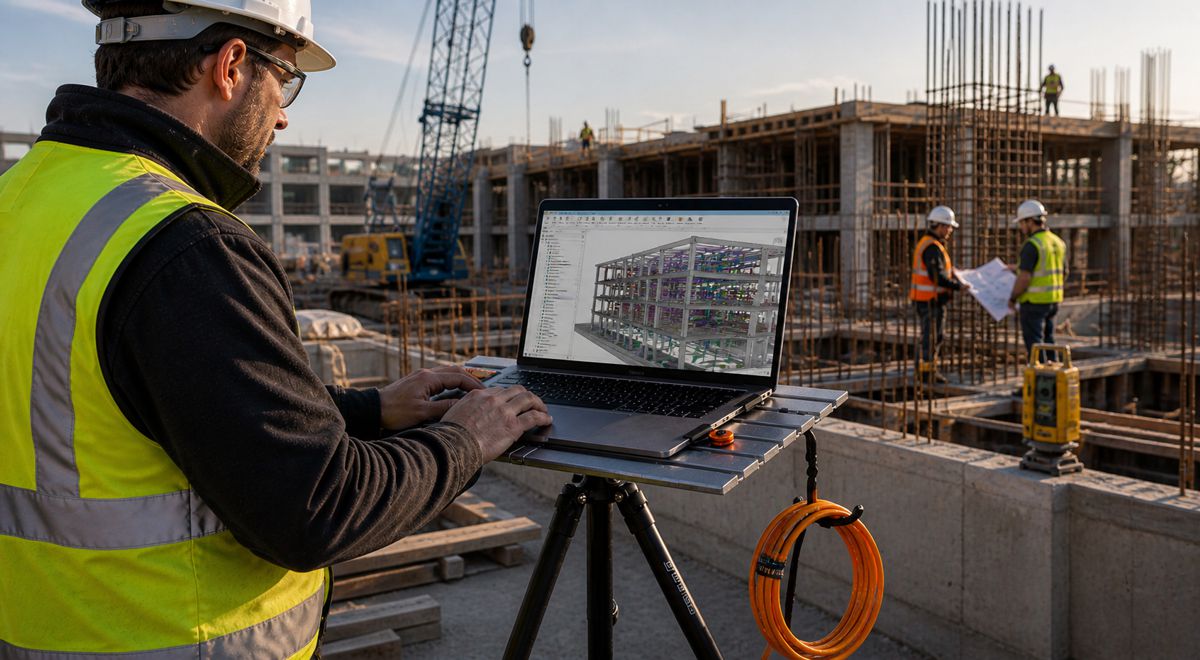

The rapid rise of artificial intelligence is reshaping how industries operate, but it’s also exposing a fundamental constraint. For all the progress in cloud computing, much of the world’s most valuable data is generated far from traditional data centres. Whether on remote construction sites, offshore energy platforms, busy ports or sprawling telecom networks, the challenge remains the same: how to process vast volumes of data where it’s created, rather than shipping it back to centralised facilities.

A new strategic collaboration between ZEDEDA and Submer signals a decisive shift in how the industry is tackling that problem. By combining modular infrastructure with advanced cooling and orchestration software, the partnership aims to bring high-density AI computing directly into operational environments where conventional data centres simply can’t go.

At a time when latency, resilience and sustainability are becoming critical metrics for infrastructure investment, this move reflects a broader transformation across construction, transport and industrial sectors. AI is no longer confined to the cloud. It’s moving into the physical world, and the infrastructure is finally catching up.

The Shift from Centralised Cloud to Edge Intelligence

For years, cloud computing has dominated the AI landscape, offering scalable processing power in highly controlled environments. Yet, as industries digitise their operations, the limitations of this model are becoming increasingly apparent. Transmitting data from remote or high-risk locations to centralised data centres introduces latency, bandwidth costs and, in some cases, regulatory complications.

Edge computing offers an alternative by processing data locally, closer to its source. According to research from Gartner, a significant proportion of enterprise-generated data is expected to be created and processed outside traditional data centres and cloud environments. That shift is particularly relevant for infrastructure-heavy industries, where real-time decision-making can directly impact safety, efficiency and profitability.

In practical terms, this means AI must operate on factory floors, inside transport networks and across energy infrastructure. These environments often lack the physical space, cooling capacity or connectivity required for traditional data centre deployments. Bridging that gap requires a fundamentally different approach to infrastructure design.

Modular Infrastructure Built for the Real World

The joint solution developed by ZEDEDA and Submer centres on modular units designed to be manufactured at scale and deployed rapidly in challenging environments. Rather than building permanent facilities, organisations can deploy self-contained AI infrastructure tailored to specific operational needs.

Three primary configurations are planned, each targeting different scales of deployment:

- Pods designed for compact edge installations such as telecom sites or industrial facilities

- Packs functioning as ruggedised micro-data centres for sectors like mining, ports and manufacturing

- Containers delivering large-scale, megawatt-level AI infrastructure suitable for sovereign deployments or GPU-as-a-service operations

These modular systems are engineered to support high-density GPU workloads, with configurations capable of exceeding 100 kW per rack. That level of power density is essential for running advanced AI applications, including computer vision, predictive maintenance and increasingly complex autonomous systems.

Crucially, the modular approach reduces deployment timelines and avoids the lengthy planning, permitting and construction processes typically associated with data centres. For industries operating on tight project schedules or in remote locations, that flexibility could prove transformative.

Liquid Cooling Unlocks High-Density AI

One of the most significant barriers to deploying AI infrastructure outside traditional facilities is heat management. GPU-intensive workloads generate enormous amounts of heat, and conventional air-cooling systems struggle to cope, particularly in harsh or space-constrained environments.

Submer’s liquid cooling technologies address this challenge using immersion and direct-to-chip cooling. These approaches allow systems to operate at far higher densities while maintaining efficiency and reliability. Compared with traditional air-cooled infrastructure, liquid cooling can significantly reduce energy consumption and remove the need for water-consuming evaporative cooling.

From an infrastructure perspective, this shift has wide-ranging implications. Energy efficiency is no longer just an environmental concern. It directly affects operating costs and deployment feasibility, especially in remote or power-limited environments. By lowering cooling requirements and improving power usage effectiveness (PUE), liquid-cooled systems enable AI infrastructure to operate in places that would otherwise be off-limits.
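Power usage effectiveness, the efficiency metric mentioned above, is conventionally defined as total facility energy divided by the energy delivered to IT equipment. A perfect facility would score 1.0, and every kilowatt spent on cooling pushes the figure higher. The worked example below uses purely illustrative numbers, not Submer measurements:

```latex
\mathrm{PUE} = \frac{E_{\text{facility}}}{E_{\text{IT}}}
             = \frac{E_{\text{IT}} + E_{\text{cooling}} + E_{\text{other}}}{E_{\text{IT}}}

% Illustrative only: 100 kW IT load, 10 kW other overhead
\text{Cooling} = 40\,\text{kW}: \quad \mathrm{PUE} = \frac{100 + 40 + 10}{100} = 1.5
\text{Cooling} = 10\,\text{kW}: \quad \mathrm{PUE} = \frac{100 + 10 + 10}{100} = 1.2
```

At a power-limited site, the same supply can therefore carry more compute when less of it goes to cooling.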

Software-Defined Resilience Changes the Economics

Beyond the hardware innovations, the partnership introduces a software-led approach to resilience that challenges conventional thinking. Traditional high-availability systems rely heavily on redundant hardware, which can be both costly and inefficient, particularly when scaled across distributed sites.

ZEDEDA’s orchestration platform takes a different route by managing resilience at the software level. Instead of duplicating hardware, the system detects failures and redistributes workloads dynamically across available resources. This approach improves utilisation, reduces excess capacity and lowers total cost of ownership.
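The announcement does not describe the internals of ZEDEDA’s orchestration logic. What follows is only a minimal sketch of the general idea of software-level failover, built on a hypothetical model in which each site has a single capacity number. When a node fails, its workloads are re-placed onto surviving nodes with spare headroom, largest first, rather than onto a dedicated standby machine. The names `Node` and `fail_over` are illustrative and are not part of any ZEDEDA API.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical edge node with a single capacity figure (arbitrary units).

    Illustrative model only; not a ZEDEDA data structure.
    """
    name: str
    capacity: float
    healthy: bool = True
    workloads: dict[str, float] = field(default_factory=dict)

    def spare(self) -> float:
        # Headroom left after the workloads currently placed here.
        return self.capacity - sum(self.workloads.values())


def fail_over(nodes: list[Node], failed: str) -> dict[str, str]:
    """Mark `failed` unhealthy and re-place its workloads on healthy nodes.

    Greedy heuristic (an assumption for illustration): handle the largest
    workloads first, and put each on the healthy node with the most spare
    capacity that can still fit it. Returns workload -> new node name;
    anything that fits nowhere is mapped to "UNPLACED".
    """
    by_name = {n.name: n for n in nodes}
    victim = by_name[failed]
    victim.healthy = False
    orphaned = sorted(victim.workloads.items(), key=lambda kv: kv[1], reverse=True)
    victim.workloads.clear()

    placement: dict[str, str] = {}
    for workload, demand in orphaned:
        candidates = [n for n in nodes if n.healthy and n.spare() >= demand]
        if not candidates:
            placement[workload] = "UNPLACED"
            continue
        target = max(candidates, key=lambda n: n.spare())
        target.workloads[workload] = demand
        placement[workload] = target.name
    return placement


if __name__ == "__main__":
    sites = [
        Node("a", 10, workloads={"vision": 6, "telemetry": 2}),
        Node("b", 10, workloads={"maint": 3}),
        Node("c", 10, workloads={"logs": 5}),
    ]
    print(fail_over(sites, "a"))  # {'vision': 'b', 'telemetry': 'c'}
```

In the example, node "a" fails. Its larger vision workload moves to "b", which has 7 units free, and the telemetry workload then fits on "c". Spreading headroom across live nodes instead of keeping idle duplicate hardware is the utilisation gain the paragraph above describes. A production orchestrator would likely also weigh factors such as GPU type, network locality and data residency.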

“As intelligence moves from the cloud into the physical world, the ability to run AI anywhere — in a remote factory, an offshore platform, or telecommunications networks — is a fundamental requirement. The world’s most critical operations generate enormous volumes of data far from any data center, and until now, the infrastructure to act on that data intelligently simply couldn’t follow. Our collaboration with Submer makes that possible now,” said Said Ouissal, CEO and founder of ZEDEDA.

The implications extend beyond cost savings. Software-defined resilience also simplifies deployment, making it easier to scale infrastructure across multiple locations without the complexity traditionally associated with distributed systems.

Enabling AI Across Industrial and Infrastructure Sectors

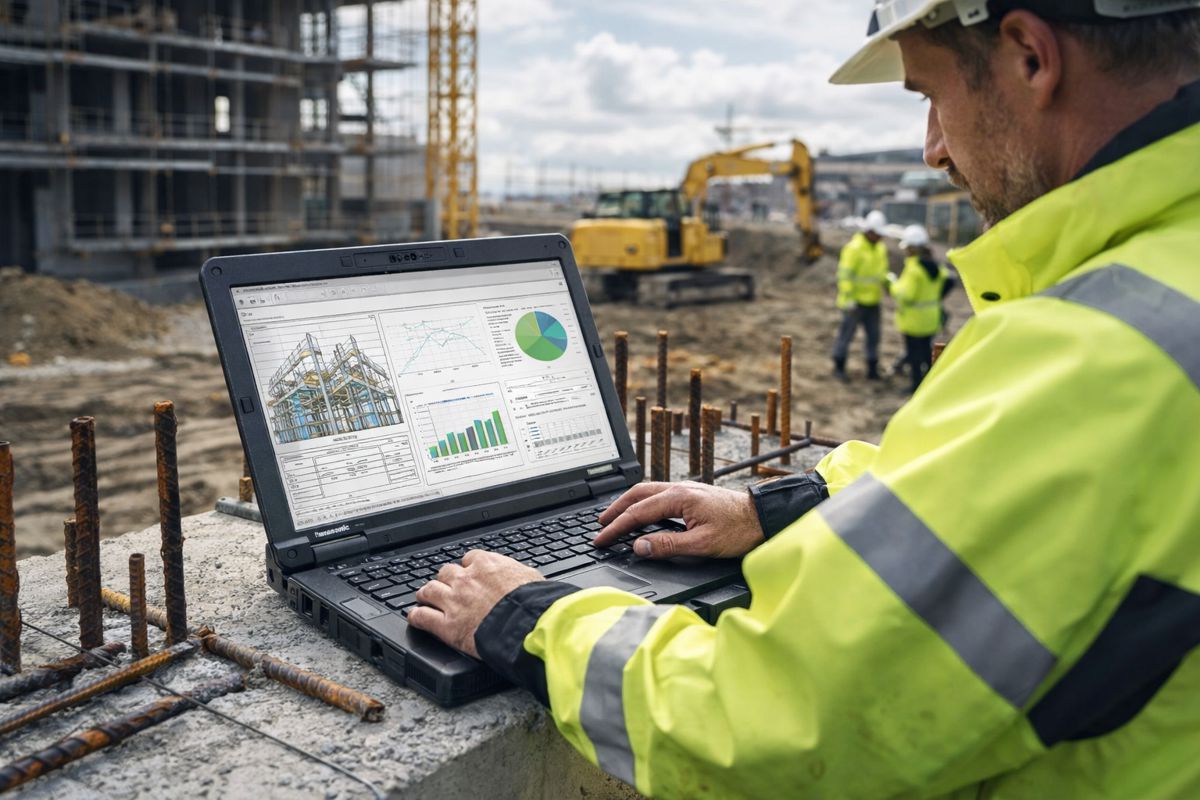

The real value of this development lies in its application across industries that depend on real-time data. In construction, for instance, AI-powered computer vision systems can monitor site safety, track progress and optimise workflows. Deploying these capabilities at the edge reduces latency and ensures continuous operation, even in areas with limited connectivity.

In the energy sector, predictive maintenance models rely on analysing equipment data in real time to prevent failures. Offshore platforms and remote installations stand to benefit significantly from localised AI processing, where connectivity to centralised systems may be unreliable or costly.

Transport and logistics networks present another compelling use case. With increasing pressure to optimise efficiency and reduce emissions, AI-driven decision-making is becoming essential. Edge deployments enable faster response times and more granular control across distributed networks, from ports to rail systems and road infrastructure.

“AI is rapidly moving from centralized cloud environments into real-world operations, from industrial sites to telecom networks and remote energy infrastructure. Delivering that intelligence requires purpose-built AI infrastructure that operates efficiently in environments where traditional data centers simply cannot exist. By combining Submer’s liquid-cooled high-density AI infrastructure with ZEDEDA’s edge intelligence platform, we’re enabling organizations to deploy scalable, resilient AI infrastructure anywhere it is needed,” said Patrick Smets, CEO of Submer.

Sustainability and the Future of AI Infrastructure

Sustainability is becoming a defining factor in infrastructure investment decisions, and AI is no exception. Data centres are already under scrutiny for their energy consumption, and as AI workloads grow, so too does their environmental impact.

Submer’s approach addresses this concern by reducing energy use and eliminating direct water consumption. The company reports significant improvements in power efficiency compared to traditional air-cooled systems, alongside measurable reductions in carbon emissions. While exact performance will vary depending on deployment conditions, the direction of travel is clear.

For governments and organisations pursuing net-zero targets, these efficiencies are not just desirable but increasingly necessary. The ability to deploy AI infrastructure that aligns with sustainability goals could accelerate adoption across sectors that have traditionally been cautious about large-scale digital transformation.

A New Model for AI Deployment

The collaboration between ZEDEDA and Submer reflects a broader industry trend towards decentralised, flexible infrastructure. Rather than building ever-larger centralised facilities, organisations are beginning to distribute compute resources closer to where they are needed most.

This shift has implications for infrastructure planning, investment strategies and even regulatory frameworks. Sovereign AI initiatives, for example, require localised processing capabilities to ensure data security and compliance. Modular edge infrastructure offers a practical pathway to achieving those objectives without the need for extensive new construction.

Pilot deployments are expected to begin later this year, with initial focus on industrial and telecommunications customers. While it remains early days, the potential impact is significant. By enabling high-performance AI in previously inaccessible locations, this approach could reshape how industries think about digital infrastructure.

Unlocking Intelligence Where It Matters Most

The move towards edge AI is not just a technological evolution. It represents a shift in how value is created across the global infrastructure ecosystem. Data is no longer an abstract asset stored in distant servers. It is a real-time resource generated at the heart of operations.

By combining modular design, advanced cooling and intelligent orchestration, ZEDEDA and Submer are addressing one of the most pressing challenges in modern infrastructure. The ability to deploy AI anywhere, at any scale, has the potential to unlock efficiencies, improve safety and drive innovation across industries.

For construction professionals, investors and policymakers, the message is clear. The future of AI will not be built solely in hyperscale data centres. It will be distributed, embedded and deeply integrated into the physical world. And with solutions like this, that future is arriving faster than many expected.